Littering is the improper disposal of waste, such as throwing it on the ground or into water. It causes many problems for the environment and the living beings that depend on it (Gholami et al., 2020; Irving, 2023; Keep Britain Tidy, 2023). Littering harms wildlife, pollutes water and soil, is linked to higher crime, lowers property values and requires substantial resources to clean up. It also creates an unsightly and unhealthy environment for humans and animals alike. It is therefore important to reduce, reuse and recycle our waste, and to dispose of it properly. Littering is part of a broader concern about urban environmental quality, which significantly affects citizens’ behaviour and well-being (e.g., Karimi et al., 2022; Oyola et al., 2022).

Littering is a complex behaviour that is resistant to change because it depends on various societal actors, including citizens, who must commit to reducing litter, and government bodies, which are responsible for waste management (Kolodko and Read, 2018). In the UK, local councils are primarily responsible for litter clean-up, bin maintenance and addressing ‘fly-tipping’ (the unauthorized dumping of waste in rural areas) (Department for Environment, Food & Rural Affairs, 2022).

In this paper, we investigate Littergram, a digital intervention to combat littering, and test whether behavioural insights can increase its effectiveness. The intervention consists of email communication with users of Littergram, a smartphone app that uses the joint concepts of ‘naming and shaming’ and information provision to encourage councils to respond more effectively to unsightly litter. Users upload geotagged images of litter, which are then shared with the responsible local council, potentially creating public pressure for clean-up. Because the location information is based on GPS data, it is generally accurate, making it easier for councils to know where their involvement is needed. Reporting litter through the app is also more effective than reporting it online. Moreover, by making their posts public, users can put pressure on councils to clean up litter that has been left in place.

Underlying Littergram is the concept of social norms, which are known to play a large role in shaping people’s behaviours, including littering (Cialdini et al., 1990; Ningrum et al., 2021). People look to others for cues as to what is appropriate in a given context. These cues come not only from seeing a behaviour as it happens, e.g., seeing someone drop a wrapper onto the ground, but also from observing the effects of past behaviours (Finnie, 1973; Geller et al., 1977; Krauss et al., 1978; Reiter and Samuel, 1980; Dur and Vollaard, 2013). Seeing a park filled with litter can signal that littering is accepted in that location. Conversely, seeing a clean park, where all litter has been properly disposed of, indicates that using bins is the norm and that, like others, one should use bins too. Cialdini et al. (1990) estimated this impact more precisely, showing that in locations where two or fewer pieces of litter were visible, the social norm was not to litter, whereas in locations where three or more pieces of litter were visible, the majority of people littered. Littergram users therefore have the potential to create a new norm – of clean streets, parks and other public spaces – which could help further reinforce the desired change (Cialdini et al., 1990).

The aim of this study was to use behavioural insights to encourage Littergram users to post pictures of litter, by systematically modifying the Littergram newsletter using the Behaviour Change Wheel (BCW) approach (Michie et al., 2014). A further aim was to outline a general methodology for approaching such a task and reporting its results. To our knowledge, this makes the study one of the first to use the BCW in the context of environmental decision-making and in emails, and one of the first to follow the framework in its entirety and report the results of the resulting intervention. This systematic procedure produced an effective intervention that increased the use of Littergram, demonstrating the benefits of applying a holistic, comprehensive and systematic behaviour change theory to promote pro-environmental actions.

Digital interventions to change behaviours

Since we used email to communicate with Littergram users, we begin with a brief review of the literature on the use of digital media, particularly email, to change behaviour. With 85% of the British population using email (Statista, 2020), email newsletters can be a useful, practical and inexpensive channel for delivering behaviour change interventions to diverse populations. Previous research shows that email-based interventions can generate behaviour change (e.g., Neff and Fry, 2009). However, to date, few studies have used a comprehensive, theory-based approach in which several Behaviour Change Techniques (BCTs) are selected systematically, as was done in this study.

We conducted a Scopus search for publications containing the words ‘behavioural’, ‘intervention’ and ‘email’ or ‘newsletter’ in the title, abstract or keywords. Most of the interventions we identified used email in a supporting role, in combination with other communication channels or BCTs (e.g., Block et al., 2008; Houston et al., 2015; Ngamruengphong et al., 2015; Dudziński et al., 2016; Hutchesson et al., 2016; Leone et al., 2016; Levenson et al., 2016; Schweier et al., 2016; Zwickert et al., 2016; Brendryen et al., 2017; Limaye et al., 2017; Skolarus et al., 2017; Van Dijk et al., 2017; Young et al., 2017, 2018; Griffin et al., 2018).
The studies that used email alone typically relied on one of three BCTs: feedback (Carrico and Riemer, 2011; Chambliss et al., 2011; Moreira et al., 2012; Dennis and Horn, 2014; Emeakaroha et al., 2014; Kramer and Kowatsch, 2017; Leung et al., 2017; Tavarez et al., 2017), reminders (e.g., Greaney et al., 2012; Murphy and DiPietro, 2012; Robinson et al., 2014; Abel et al., 2015; Robertson, 2016; Bradley et al., 2017; Petrella et al., 2017) or information provision/education (Plotnikoff et al., 2010; Poddar et al., 2012; Morgan et al., 2013; Kattelmann et al., 2014; Kothe and Mullan, 2014a, 2014b; Schneider et al., 2015).
Several other publications compared generic and personalized emails, showing that personalized messages could have a greater impact than generic ones (Hageman et al., 2005; Walker et al., 2009, 2010; Yates et al., 2012; Short et al., 2015b).

One reason for the mixed effectiveness of such interventions may be that they select BCTs intuitively rather than systematically. Previous research suggests that interventions are more effective when they rely on a systematic, theory-driven approach (Albarracín et al., 2005; Noar and Zimmerman, 2005; Abraham et al., 2009). Indeed, the few published email-based interventions that did use a theory-informed approach were all effective in generating behaviour change. For example, Parrott et al. (2008) designed a successful 3-week study in which positively and negatively framed emails were developed using the Theory of Planned Behaviour (Ajzen, 1985, 1991; Ajzen and Madden, 1986); the positively framed emails were the most effective. Similarly, Blake et al. (2017) showed that theory-based emails had a greater impact on behaviour than standard text messages. Other theory-informed interventions, such as one using messages based on a habit framework (Rompotis et al., 2014) and another using a cognitive behavioural therapy approach (Trockel et al., 2011), have also proved effective in changing behaviour.

The key insight from this literature is that while digital channels such as email are ubiquitous and simple to use, their effectiveness is not guaranteed. Interventions developed with an empirically and theoretically grounded approach have, however, generally proved effective. In our study, we apply one of the most comprehensive theoretical frameworks available, the BCW, to build an email intervention addressing littering. The BCW draws on a very wide set of empirically grounded theories and frameworks of behaviour and behaviour change developed in psychology, and it provides researchers with explicit guidance on how to use them in practice and on the pitfalls that might arise.

Method

This study was conducted in collaboration with Littergram (http://www.Littergram.co.uk/) and received ethics approval from the Humanities and Social Sciences Research Ethics Committee at the University of Warwick.

In our development and implementation of behavioural interventions, we sought to apply the BCW method as outlined by Michie et al. (2014) as rigorously as possible. The BCW method is a highly structured process for identifying the barriers to and facilitators of behaviour change, at the core of which is the COM-B (Capability, Opportunity, Motivation – Behaviour) model. This model enables a systematic diagnosis of the causes of a behaviour, which are then matched with intervention strategies. These strategies are organized into three distinct but interrelated components: Policies, Intervention Functions and BCTs. Intervention Functions define the essential roles of interventions, such as education or enablement, through which they aim to modify behaviour. Policies are overarching strategies or rules that support intervention delivery, creating an environment conducive to the desired behaviour change. Our primary focus was on BCTs, the specific techniques designed to directly influence behaviour. BCTs are diverse and include, but are not limited to, approaches such as nudges and boosts (Grüne-Yanoff et al., 2018). This comprehensive framework supports a holistic approach to behaviour change, allowing interventions to be tailored to the identified behavioural drivers.

To design, develop and implement our interventions, we closely followed (subject to the real-world constraints outlined later) the steps of the BCW method as described by Michie et al. (2014). First, we identified the target behaviour (Step 1) and then designed a survey based on the Theoretical Domains Framework (TDF; Michie et al., 2014) to identify key mediators of the selected target behaviour (Step 2). Next, the survey (Study 1) was conducted and, based on its results and the BCW method, the most suitable BCTs for the intervention were identified (Steps 3 to 7). The intervention (Step 8/Study 2) was then designed and implemented. Finally, a follow-up investigation (Step 9/Study 3) was conducted to evaluate which of the BCTs used in the intervention had the biggest impact on behaviour, and to evaluate the effectiveness of the intervention (Step 10).

Step 1: Problem definition

The first step of the BCW method is to define a problem in behavioural terms and to select and specify a target behaviour. This is done by answering a series of questions. We defined the target behaviour with these questions and answers:

Who needs to perform the behaviour? Littergram users who are subscribed to the Littergram newsletter.

What do they need to do differently? They need to post at least three pictures of litter on Littergram in every week of the intervention (see Footnote 1).

When do they need to do it? Anytime they see litter.

Where do they need to do it? On Littergram.

How often do they need to do it? At least three times a week during the 6-week intervention period.

Who else is needed to do it? No one.
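The Step 1 answers amount to a small, checkable specification. As a minimal sketch, the Python below encodes them as a record and adds a hypothetical helper, `met_target`, that tests whether one user's weekly post counts meet the "at least three posts in every week of the 6-week intervention" criterion. The class, field and function names are illustrative assumptions, not part of Littergram or the BCW.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TargetBehaviour:
    """Step 1 answers; field names are illustrative, not a BCW standard."""
    who: str
    what: str
    when: str
    where: str
    min_posts_per_week: int
    weeks: int
    others_needed: str = "No one"


LITTERGRAM_TARGET = TargetBehaviour(
    who="Littergram users subscribed to the Littergram newsletter",
    what="Post pictures of litter on Littergram",
    when="Anytime they see litter",
    where="On Littergram",
    min_posts_per_week=3,
    weeks=6,
)


def met_target(weekly_posts: list[int], target: TargetBehaviour) -> bool:
    """Hypothetical check: did a user reach the weekly minimum in every week?"""
    if len(weekly_posts) != target.weeks:
        raise ValueError(f"expected {target.weeks} weekly counts, got {len(weekly_posts)}")
    return all(count >= target.min_posts_per_week for count in weekly_posts)


print(met_target([3, 5, 3, 4, 3, 6], LITTERGRAM_TARGET))  # True
print(met_target([3, 5, 2, 4, 3, 6], LITTERGRAM_TARGET))  # False
```

Writing the target as data keeps the "how often" answer in one place, so any later check of whether users met it uses the same threshold.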

Step 2: TDF questionnaire development and distribution

To identify barriers to and enablers of posting on Littergram, we administered a questionnaire based on the TDF and on the statements outlined for each of the fourteen domains in Huijg et al. (2014; see Table 1 in the Appendix).

The survey was set up in Qualtrics and distributed to Littergram users via a newsletter. The first of the two emails with a link to the survey was sent on 20 December 2016 to 8112 people (see Figure 1 in Appendix for previews of the two emails sent). Of those, 2389 (29.45%) opened the email and 266 (3.28% of all recipients and 11.13% of those who opened the email) clicked on the link to the survey. The second email was sent on 23 December to 8025 people. Of those, 1957 (24.39%) opened the email and 100 (1.25% of all recipients and 5.11% of those who opened the email) clicked on the link. We could not collect data on ‘overlaps’, i.e., people who opened both emails, so we cannot say how many of the 1957 people who opened the second email also opened the first. Overall, 247 people filled out the survey (mean age = 52.86, 27.8% female). Forty-five of them answered only the first few questions, and their data were therefore excluded. The subsequent analyses are based on responses from 202 participants.
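Recomputing the funnel percentages from the raw counts is a quick consistency check. The sketch below assumes the standard definitions (open rate = opened/sent, click rate = clicked/sent, click-to-open rate = clicked/opened) and uses only the counts reported above; the `EmailFunnel` class is an illustrative name.

```python
from dataclasses import dataclass


@dataclass
class EmailFunnel:
    """Counts for one newsletter send (figures from Step 2)."""
    sent: int
    opened: int
    clicked: int

    def open_rate(self) -> float:
        # Share of all recipients who opened the email, in percent.
        return 100 * self.opened / self.sent

    def click_rate(self) -> float:
        # Share of all recipients who clicked through to the survey, in percent.
        return 100 * self.clicked / self.sent

    def click_to_open_rate(self) -> float:
        # Share of openers who clicked through, in percent.
        return 100 * self.clicked / self.opened


first = EmailFunnel(sent=8112, opened=2389, clicked=266)
second = EmailFunnel(sent=8025, opened=1957, clicked=100)

for label, funnel in [("20 Dec", first), ("23 Dec", second)]:
    print(f"{label}: open {funnel.open_rate():.2f}%, "
          f"click {funnel.click_rate():.2f}%, "
          f"click-to-open {funnel.click_to_open_rate():.2f}%")
```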

Figure 1. Intervention timeline and structure.

Step 3: Identifying key domains (Study 1)

Procedure

Participants were first told they were going to answer questions about posting pictures of litter on Littergram. They were then given a definition of ‘litter’. Specifically, they read:

By “litter” we mean any waste products that have been improperly disposed of at an inappropriate location. Litter can be as small as a cigarette butt or a chewing gum dropped on the ground; or as big as bin bags left behind, an overfilled bin, or fly-tipping.

Participants next indicated whether they had ever posted on Littergram and, if they answered ‘yes’, how many times they had posted a picture in the previous 7 days, with possible answers ranging from zero to five or more times. Next, they saw the 33 TDF statements (Table 1 in Appendix), shown in a random order, and indicated their agreement with each on a 7-point scale, from ‘Strongly disagree’ to ‘Strongly agree’. They then indicated their intent to post on Littergram in the next 7 days, with answers coded on a 5-point scale from ‘Zero’ to ‘Five times or more’. Finally, they provided demographic data (gender, age, location).

Table 1. Behaviour Change Wheel steps as implemented in the Littergram research project

Results

Internal consistency of TDF scales. To keep the survey manageable, we shortened the initial survey by removing items that did not correlate well with the others in their domain. The full set of items we started with is shown in Appendix Table 1. Five domains in the initial survey (Memory, attention and decision processes; Behavioural regulation; Environmental context and resources; Goals; Reinforcement) were composed of three statements and one domain (Beliefs about consequences) was composed of four statements. For domains with multiple statements, we calculated Cronbach’s alpha to measure internal consistency (see Table 2). For every domain with three or more statements, we removed one item based on the results of this analysis, to create a briefer scale while maximizing internal consistency. Removed items are marked with an asterisk in Appendix Table 1. All subsequent analyses are based on this shorter version of the TDF survey.
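For readers who want to reproduce this step, a minimal NumPy sketch of Cronbach's alpha, α = k/(k − 1) · (1 − Σσ²ᵢ/σ²ₜ), and of "alpha if item deleted" (the quantity used to decide which item to drop) follows. The response matrix is made-up illustrative data (two perfectly consistent items plus one unrelated item), not the Study 1 data, and the helper names are assumptions.

```python
import numpy as np


def cronbach_alpha(items) -> float:
    """Cronbach's alpha for an (n_respondents, k_items) matrix of scores."""
    scores = np.asarray(items, dtype=float)
    k = scores.shape[1]
    if k < 2:
        raise ValueError("alpha needs at least two items")
    item_variances = scores.var(axis=0, ddof=1)      # variance of each item
    total_variance = scores.sum(axis=1).var(ddof=1)  # variance of the summed scale
    return float(k / (k - 1) * (1 - item_variances.sum() / total_variance))


def alpha_if_item_deleted(items) -> list[float]:
    """Alpha of the scale recomputed with each item left out in turn."""
    scores = np.asarray(items, dtype=float)
    return [cronbach_alpha(np.delete(scores, j, axis=1)) for j in range(scores.shape[1])]


def item_to_drop(items) -> tuple[int, float]:
    """Index of the item whose removal gives the highest alpha, and that alpha."""
    alphas = alpha_if_item_deleted(items)
    best = int(np.argmax(alphas))
    return best, alphas[best]


# Illustrative 7-point responses: items 0 and 1 agree perfectly, item 2 is unrelated.
responses = np.array([
    [1, 1, 4], [2, 2, 1], [3, 3, 7], [4, 4, 2],
    [5, 5, 6], [6, 6, 3], [7, 7, 5],
])
print(round(cronbach_alpha(responses), 3))
print(item_to_drop(responses))
```

Here the unrelated item is flagged for removal because the two remaining items form a perfectly consistent scale.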

Table 2. Cronbach’s alphas for Littergram TDF items

Key domains. Using a backwards exploratory method, we conducted a series of multiple regressions with the TDF domain scores as predictors of users’ intention to post pictures on Littergram (the Intention scale). Based on the results, a model with three TDF domains as predictors was selected for the subsequent intervention development. The model was significant (F(3, 198) = 25.94; p < 0.001), with an R² of 0.282. The three identified domains were Behavioural regulation (standardized β = 0.203; p = 0.004), Emotions (standardized β = 0.117; p < 0.001) and Beliefs about consequences (standardized β = 0.133; p = 0.049).
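The text does not say which criterion drove the backwards procedure. The sketch below uses AIC as a stand-in criterion (an assumption, not necessarily the authors' rule) on synthetic data in which only two of five hypothetical "domain scores" predict intention. It also shows that the overall F statistic follows from R², the number of predictors k and the sample size n as F = (R²/k)/((1 − R²)/(n − k − 1)), with degrees of freedom (k, n − k − 1); for R² = 0.282, k = 3 and n = 202 this gives approximately 25.9, in line with the reported F.

```python
import numpy as np


def ols_rss(X: np.ndarray, y: np.ndarray) -> float:
    """Residual sum of squares of an OLS fit with an intercept."""
    A = np.column_stack([np.ones(len(y)), X])
    coef, *_ = np.linalg.lstsq(A, y, rcond=None)
    resid = y - A @ coef
    return float(resid @ resid)


def aic(X: np.ndarray, y: np.ndarray) -> float:
    """Gaussian AIC up to a constant: n*log(RSS/n) + 2*(number of coefficients)."""
    n = len(y)
    n_coef = X.shape[1] + 1  # slopes plus intercept
    return n * np.log(ols_rss(X, y) / n) + 2 * n_coef


def backward_eliminate(X: np.ndarray, y: np.ndarray, names: list[str]) -> list[str]:
    """Repeatedly drop the predictor whose removal lowers AIC the most."""
    keep = list(range(X.shape[1]))
    current = aic(X[:, keep], y)
    while len(keep) > 1:
        trials = [(aic(X[:, [c for c in keep if c != j]], y), j) for j in keep]
        best_aic, drop = min(trials)
        if best_aic >= current:  # no single removal improves AIC, so stop
            break
        keep.remove(drop)
        current = best_aic
    return [names[j] for j in keep]


def overall_f(r2: float, k: int, n: int) -> float:
    """Overall F for an OLS model with k predictors and n cases; df = (k, n - k - 1)."""
    return (r2 / k) / ((1 - r2) / (n - k - 1))


# Synthetic illustration: only the first two of five 'domain scores' drive intention.
rng = np.random.default_rng(42)
n = 200
X = rng.normal(size=(n, 5))
y = 1.0 * X[:, 0] + 0.8 * X[:, 1] + rng.normal(size=n)
names = ["domain_a", "domain_b", "domain_c", "domain_d", "domain_e"]
print(backward_eliminate(X, y, names))
print(round(overall_f(0.282, k=3, n=202), 2))  # 25.92
```

A p-value-based stopping rule would follow the same loop, with the AIC comparison replaced by a significance test on each candidate predictor.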

Step 4: Identifying intervention functions

The BCW lists nine types (or ‘functions’) of interventions (education, persuasion, incentivization, coercion, training, restriction, environmental restructuring, modelling and enablement), and the next step of the framework is to identify which of them to use in the intervention. Since this intervention was delivered through newsletters, we chose education (increasing knowledge or understanding) and enablement (increasing means/reducing barriers) as intervention functions. Specifically, messages related to Beliefs about consequences would address the education function, whereas messages referring to Emotions and Behavioural regulation would address the enablement function.

Step 5: Identifying policy domains

The mode of delivery of the intervention (i.e., a series of newsletters) meant that, of the available policy domains (Communication/Marketing, Guidelines, Fiscal measures, Regulation, Legislation, Environmental/Social planning and Service provision), we would be working with the first: Communication/Marketing.

Step 6: Selection of BCTs

The BCW incorporates the BCT Taxonomy, which describes 93 theoretically informed and replicable techniques, and provides links between specific techniques and the domains they are best suited to influence (Cane et al., 2015). Using these links, we developed a multicomponent intervention targeting the key domains. Given the mode of delivery, the intervention used three BCTs, one for each key domain: Social and environmental consequences; (Monitoring of) emotional consequences; and Self-monitoring of behaviour, as described in Table 3 and Step 8.
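Steps 4 to 6, together with the delivery days set out in Step 8, link each key domain to one intervention function and one BCT. Written as data, this mapping makes the one-BCT-per-domain constraint explicit and checkable. The sketch below is an illustrative encoding of the choices described in the text; the record and function names are assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DesignRow:
    domain: str                 # key TDF domain from Study 1 (Step 3)
    intervention_function: str  # BCW intervention function (Step 4)
    bct: str                    # technique from the BCT Taxonomy (Step 6)
    send_day: str               # newsletter day (Step 8)


LITTERGRAM_DESIGN = [
    DesignRow("Beliefs about consequences", "education",
              "Social and environmental consequences", "Friday"),
    DesignRow("Emotions", "enablement",
              "Monitoring of emotional consequences", "Tuesday"),
    DesignRow("Behavioural regulation", "enablement",
              "Self-monitoring of behaviour", "Thursday"),
]


def one_bct_per_domain(design: list[DesignRow]) -> bool:
    """True if every domain, BCT and send day appears exactly once."""
    for column in ("domain", "bct", "send_day"):
        values = [getattr(row, column) for row in design]
        if len(values) != len(set(values)):
            return False
    return True


print(one_bct_per_domain(LITTERGRAM_DESIGN))  # True
```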

Table 3. Littergram study behaviour change techniques (BCTs)

Note that in this trial, we were constrained to using only one BCT per theoretical domain (barrier/enabler) because of the complexity of implementing too many techniques simultaneously. Given that participants received only three emails per week, each addressing a different domain barrier, it made practical sense to employ one technique per factor. Overloading participants with multiple, differing techniques could have caused cognitive overload, potentially reducing engagement. By focusing on a single BCT for each domain, we aimed to create a sense of familiarity with the content. To narrow down the number of BCTs, we followed the APEASE criteria from the BCW methodology: Acceptability, Practicability, Effectiveness, Affordability, Side-effects/Safety and Equity. We considered the cost to the app company (Practicability and Affordability), the likely effectiveness of the selected techniques (guided by the BCTs most frequently used in the BCW handbook), and how participants might respond to an excessive number of BCTs (Acceptability). This trial is an initial step in demonstrating the utility of the BCW approach; future research should test additional BCTs and compare their impact on behaviour with that of the techniques used here, particularly in digital campaigns.

Step 7: Identifying mode of delivery

The BCW includes six modes of delivery for Communication-based interventions, comprising face-to-face modes and distance modes (e.g., broadcast, digital, outdoor and print media). Our chosen mode of delivery was digital media, specifically email.

Step 8: Intervention (Study 2)

Steps 1–7 led to the development of a behaviour change intervention consisting of three BCTs, which addressed the three barriers to behaviour change identified in the survey.

Littergram newsletter subscribers were randomly allocated to two experimental groups. Both groups received the same emails; the only difference was timing, as the second group started receiving emails two weeks later than the first. The intervention started on a Friday (16 June 2017 for Group 1 and 30 June 2017 for Group 2) and ran for 6 weeks. See Table A2 in the Appendix for a detailed schedule.

On every Friday during the intervention period, Littergram users received an email with the Social and environmental consequences BCT. We chose Friday as the day to send emails with reminders of consequences of (not) posting, assuming weekends were the time when people were more likely to be outdoors and to use the App. There were three types of message, each written with a positive (gain) and a negative (loss) frame (see Figures A1–A3 in the Appendix for examples of the six emails). The order in which the emails were sent was randomized.

After the weekend, on Tuesdays, participants received emails with the second of the three BCTs, Monitoring of emotional consequences (see Figure A4). In these emails, recipients rated how they felt about their activity on Littergram in the previous 7 days on a 3-point emoji scale (a frowning, a neutral and a happy face). Depending on whether or not the person had posted in this period, the email asked either how they felt about posting (if they had posted) or how they would have felt had they posted (if they had not). We asked the non-posting group how they would have felt about posting, rather than how they felt about not posting, so that both scales concerned the same action.

Two days later, on Thursdays, everyone received an email with their progress update and encouragement to post at least three times in the coming week. Specifically, each person was reminded how many times they had posted on Littergram in the previous 7 days; this was how we delivered the third BCT, Self-monitoring of behaviour (see Figure A5 in Appendix).

The next day, a Friday, participants received another email with information about the positive consequences of posting on Littergram or the negative consequences of not posting, and so on for 6 weeks, for a total of 18 emails (see Footnote 2). Figure 1 shows the intervention timeline and structure.
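The allocation and weekly cadence described above can be generated programmatically. This is a minimal sketch under stated assumptions: each of the six weeks is treated as a Friday, Tuesday, Thursday cycle starting on the group's start date; the six Friday messages (three types, each in a gain and a loss frame) get placeholder labels; and seeded shuffles stand in for whatever randomization procedure was actually used. The exact send dates are in Table A2 and may differ from this sketch.

```python
import datetime as dt
import random

# Day offsets from each week's Friday and the BCT delivered that day.
SEND_PLAN = [
    (0, "Social and environmental consequences"),  # Friday
    (4, "Monitoring of emotional consequences"),   # following Tuesday
    (6, "Self-monitoring of behaviour"),           # following Thursday
]

# Three Friday message types, each in a gain and a loss frame (placeholder labels).
FRIDAY_MESSAGES = [f"consequences message {m} ({frame} frame)"
                   for m in (1, 2, 3) for frame in ("gain", "loss")]


def assign_groups(user_ids, seed: int = 0) -> dict[str, list]:
    """Randomly split subscribers into two groups whose sizes differ by at most 1."""
    shuffled = list(user_ids)
    random.Random(seed).shuffle(shuffled)
    half = len(shuffled) // 2
    return {"Group 1": shuffled[:half], "Group 2": shuffled[half:]}


def build_schedule(start: dt.date, weeks: int = 6, seed: int = 0):
    """List (send_date, BCT, content) tuples for one group's intervention."""
    if start.weekday() != 4:  # Monday == 0, so Friday == 4
        raise ValueError("the intervention starts on a Friday")
    friday_order = FRIDAY_MESSAGES.copy()
    random.Random(seed).shuffle(friday_order)  # randomized order of Friday emails
    schedule = []
    for week in range(weeks):
        friday = start + dt.timedelta(weeks=week)
        for offset, bct in SEND_PLAN:
            content = friday_order[week % len(friday_order)] if offset == 0 else bct
            schedule.append((friday + dt.timedelta(days=offset), bct, content))
    return schedule


groups = assign_groups(range(9078))
group1 = build_schedule(dt.date(2017, 6, 16))
group2 = build_schedule(dt.date(2017, 6, 30))
print(len(group1), group1[0][0], group2[0][0])  # 18 2017-06-16 2017-06-30
```

Because both groups share one generator and differ only in start date, the staggered design reduces to a two-week shift.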

Results

Data limitations

Our aim was to conduct a detailed evaluation of the intervention, estimating the impact not only of the intervention as a whole but also of its individual components, for example which BCT and which subtype (positive vs. negative framing of consequences in the Friday emails) had the biggest impact on behaviour. However, despite careful design of the study methodology and agreements with Littergram on the type of data to be recorded, this was not possible.

First, we were not able to obtain data on which emoji participants clicked, which made a more detailed analysis of the impact of BCT 2 impossible. Second, we could not obtain precise information on the number of active Littergram users on each day, so the analysis was conducted on the average number of posts per day rather than per user. Most importantly, there was a discrepancy between the dates emails were sent and the dates they were opened. A more detailed analysis of the intervention relied on the assumption that people would read emails on the day they received them. However, some people opened emails with a delay and/or opened several emails at once. Hence, it was not possible to identify which BCT affected a person’s behaviour on a particular day. For example, if a person opened both a Tuesday and a Thursday email on Friday, there was no way to determine whether her behaviour was influenced by BCT 2 or BCT 3. Therefore, the subsequent analysis treats the intervention as a whole.

Overall, 9078 Littergram users received intervention emails, and the average email open rate was 25.70%. We conducted an ‘intention to treat’ analysis (e.g., Gupta, 2011) on all data, that is, including recipients who did not open the emails.

To evaluate impact, we conducted a trend analysis of Littergram usage, combined with a series of regressions and ANOVAs, comparing Littergram usage across four periods: the pre-intervention period, the intervention period (combining results for Groups 1 and 2), the post-intervention period and a control period equivalent to the intervention period in the previous year, i.e., 16 June to 4 August 2016. Additional econometric modelling techniques were used to assess the possible impact of autocorrelation and seasonality in Littergram usage on the results.
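The text does not name the econometric techniques used for autocorrelation and seasonality. As one common, simple possibility (an assumption, not necessarily what was done here), residual autocorrelation around a linear trend can be summarized with the Durbin–Watson statistic, and weekly seasonality inspected through mean posts per weekday. The daily series below is hypothetical, not Littergram data.

```python
import numpy as np


def linear_trend_residuals(daily_posts) -> np.ndarray:
    """Residuals after removing a straight-line trend over day index 0..n-1."""
    y = np.asarray(daily_posts, dtype=float)
    t = np.arange(len(y))
    slope, intercept = np.polyfit(t, y, 1)
    return y - (intercept + slope * t)


def durbin_watson(resid) -> float:
    """About 2: no lag-1 autocorrelation; below 2: positive; above 2: negative."""
    e = np.asarray(resid, dtype=float)
    return float(np.sum(np.diff(e) ** 2) / np.sum(e ** 2))


def weekday_means(daily_posts, first_weekday: int) -> np.ndarray:
    """Mean posts per weekday (index 0 = Monday) for consecutive daily counts."""
    y = np.asarray(daily_posts, dtype=float)
    weekday = (first_weekday + np.arange(len(y))) % 7
    totals = np.bincount(weekday, weights=y, minlength=7)
    counts = np.bincount(weekday, minlength=7)
    return totals / np.maximum(counts, 1)


# Hypothetical daily counts: a mild trend plus a weekend bump (not Littergram data).
t = np.arange(56)
posts = 40 + 0.3 * t + 8 * (t % 7 >= 5)
print(round(durbin_watson(linear_trend_residuals(posts)), 2))
print(weekday_means(posts, first_weekday=0).round(1))
```

A regular weekly pattern like the one simulated here leaves structured residuals, which is why checking the Durbin–Watson value before treating days as independent observations is worthwhile.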

Usage trends for the four analysis periods

Data were divided into the following four analysis periods:

• Three months preceding intervention (referred to as ‘3 Months Pre’ in data analysis);

• Eight weeks of the intervention (referred to as ‘Intervention’ in data analysis);

• Three months post intervention (referred to as ‘3 Months Post’ in data analysis);

• A period equivalent to the 8 weeks of intervention in the previous year, i.e., 16 June to 4 August 2016 (referred to as ‘2016 Intervention Equivalent’ in data analysis).

The periods were counted from the days on which the intervention started and ended for each group. Specifically, ‘three months preceding intervention’ means the period from 16 March to 15 June 2017 for Group 1 and from 31 March to 30 June 2017 for Group 2. ‘Three months post intervention’ refers to the period from 22 July to 21 October 2017 for Group 1 and from 5 August to 4 November 2017 for Group 2. Finally, the ‘2016 intervention equivalent’ refers to 16 June to 21 July 2016 for Group 1 and 30 June to 4 August 2016 for Group 2 (see Table 4). Figure 2 shows the daily number of posts for the four analysis periods.

Figure 2. Daily number of Littergram posts for the different analysis periods.

Table 4. Littergram analysis periods

To estimate the intervention’s impact, we first examined trends in Littergram usage within each analysis period and then compared usage across the periods using an ANOVA and appropriate post hoc tests to detect significant differences.

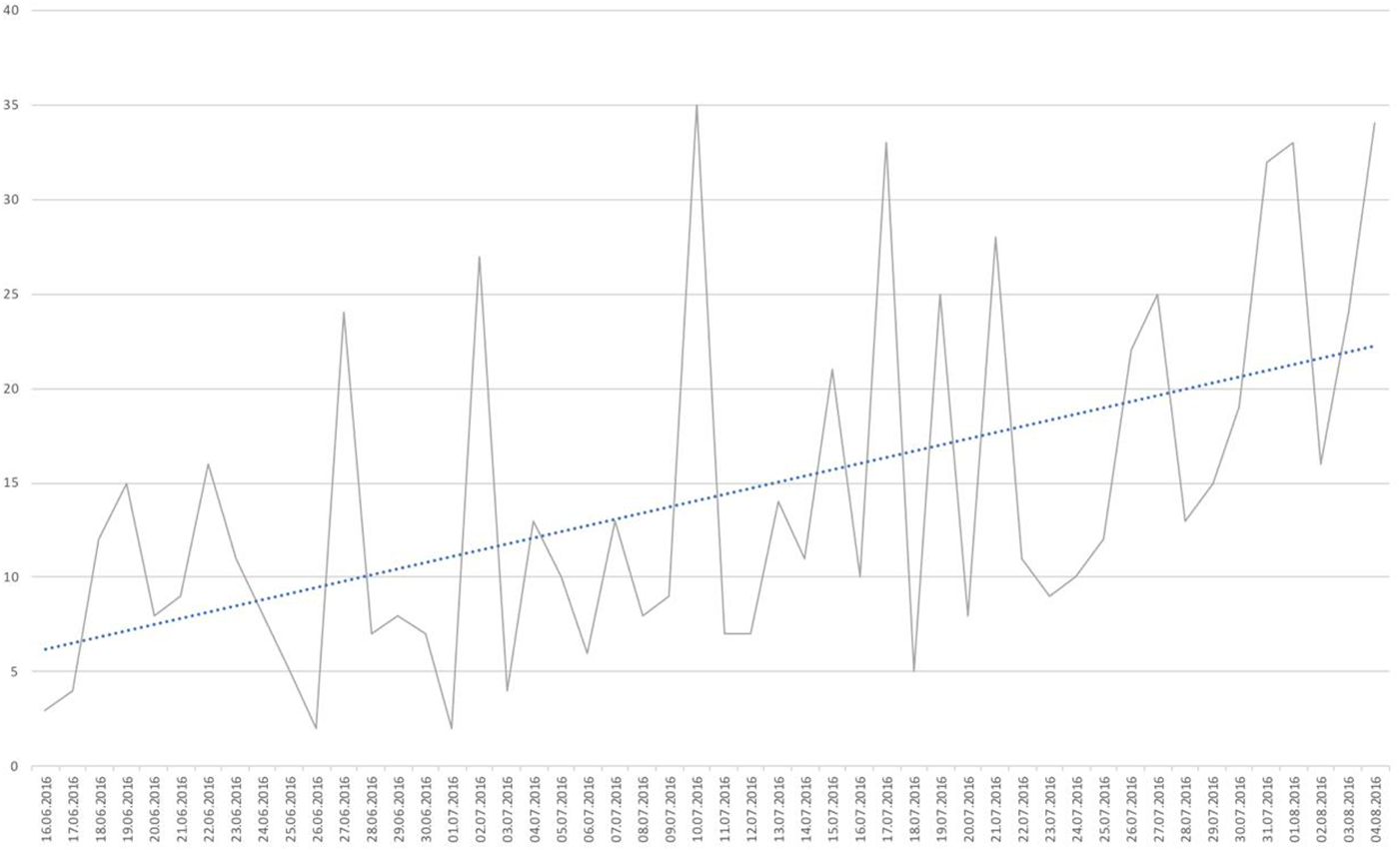

2016 intervention equivalent. Littergram usage grew during the 2016 intervention equivalent period, between June and August 2016, with a significant upward trend in daily posts (standardized β = 0.514; p < 0.001). A simple regression of daily posts on time showed that the linear model fit the data well (F(1, 47) = 16.849; p < 0.001), explaining 25% of the variance (adjusted R² = 0.248). This growth can be attributed to an expanding user base, as the number of Littergram users increased from approximately 3500 to 4500 during the 8-week period. Figure 3 shows daily posts with a trendline for the 2016 intervention equivalent period.
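With a single predictor (day index), the standardized β reported in these trend analyses equals Pearson's r between day and daily posts. It is unitless, and it fully determines R², adjusted R² and the F statistic; a slope in posts per day per day is obtained only by rescaling β by the ratio of standard deviations. The sketch below, with toy data, makes these relationships explicit. It is a generic illustration, not the authors' analysis code.

```python
import numpy as np


def trend_summary(daily_posts) -> dict:
    """Simple linear regression of daily posts on day index (0, 1, ..., n-1)."""
    y = np.asarray(daily_posts, dtype=float)
    n = len(y)
    t = np.arange(n, dtype=float)
    r = float(np.corrcoef(t, y)[0, 1])         # standardized beta with one predictor
    slope = r * y.std(ddof=1) / t.std(ddof=1)  # unstandardized: change in posts/day per day
    r2 = r ** 2
    return {
        "std_beta": r,
        "slope": float(slope),
        "adj_r2": 1 - (1 - r2) * (n - 1) / (n - 2),
        "F": r2 / (1 - r2) * (n - 2),          # F(1, n - 2)
        "df": (1, n - 2),
    }


print(trend_summary([1, 3, 2, 5, 4]))
```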

Figure 3. 2016 Equivalent daily posts with a trendline.

Three months preceding the intervention

Littergram usage was stable during the 3 months preceding the intervention (standardized β = −0.125; p = 0.234). A simple regression showed that the linear model did not fit the data (F(1, 90) = 1.347; p = 0.234; adjusted R² = 0.005), indicating no significant trend. This stability was expected, as the user base had stopped growing and remained stable at around 9500 users throughout this period. Figure 4 shows daily posts with a trendline for the three months preceding the intervention.

Figure 4. Three months pre daily posts with a trendline.

Intervention period

Littergram usage grew during the intervention period, with a significant upward trend in daily posts (standardized β = 0.482; p = 0.001). A simple regression showed that the linear model fit the data well (F(1, 46) = 13.901; p = 0.001), explaining 22% of the variance (adjusted R² = 0.215). This increase cannot be attributed to a growing user base, as the number of users was stable. Figure 5 shows daily posts with a trendline for the intervention period.

Figure 5. Intervention daily posts with a trendline.

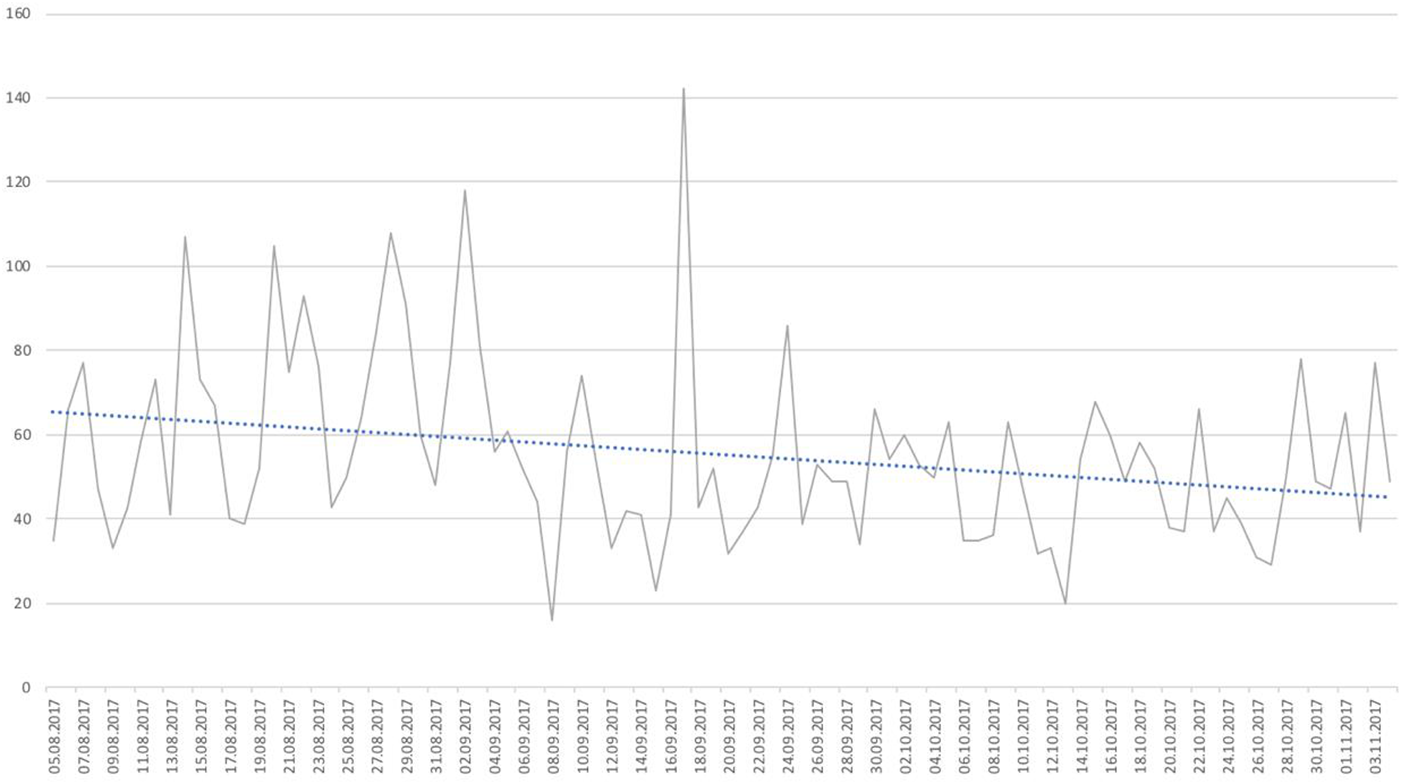

Three months after the intervention

Littergram usage declined in the three months after the intervention, with a significant downward trend in daily posts (standardized β = −0.602; p < 0.001). A simple regression showed that the linear model fit the data well (F(1, 89) = 50.621; p < 0.001), explaining 36% of the variance (adjusted R² = 0.355). Figure 6 shows daily posts with a trendline for the three months after the intervention.

Figure 6. Daily posts with a trendline for the three months after the intervention.
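Each of the four trend regressions above has a single predictor (day), so the standardized slope equals Pearson's r, and R², adjusted R² and F(1, df) can all be recovered from β and the residual degrees of freedom. The Python sketch below (helper names are ours, not from any statistics package) recomputes these statistics from the reported β values as a consistency check; results should land close to the reported ones, and any larger gap would be worth checking against the original output. The second helper makes explicit that β is in standard-deviation units: a slope in posts per day is b = β·s_y/s_x, where we assume the predictor is a day index 1..n.

```python
import math

def fit_stats_from_std_beta(std_beta: float, df_resid: int) -> dict:
    """Recover fit statistics of a one-predictor OLS model from its standardized slope.

    With one predictor, the standardized slope equals Pearson's r, so R^2 = beta^2.
    The model has an intercept and one slope, so n = df_resid + 2.
    """
    n = df_resid + 2
    r2 = std_beta ** 2
    adj_r2 = 1 - (1 - r2) * (n - 1) / df_resid
    f_stat = r2 * df_resid / (1 - r2)  # F(1, df_resid)
    return {"n": n, "r2": r2, "adj_r2": adj_r2, "F": f_stat}

def slope_per_day(std_beta: float, sd_posts: float, n_days: int) -> float:
    """Convert a standardized slope into posts per day: b = beta * s_y / s_x.

    ASSUMPTION: the predictor is a day index 1..n_days, whose sample SD is sqrt(n(n+1)/12).
    """
    sd_day = math.sqrt(n_days * (n_days + 1) / 12)
    return std_beta * sd_posts / sd_day

# Standardized betas and residual degrees of freedom as reported in the text.
reported = {
    "2016 equivalent": (0.514, 47),
    "3 months pre": (-0.125, 90),
    "intervention": (0.482, 46),
    "3 months post": (-0.602, 89),
}

for period, (beta, df) in reported.items():
    s = fit_stats_from_std_beta(beta, df)
    print(f"{period:16s} n={s['n']:3d} adj R2={s['adj_r2']:.3f} F={s['F']:.2f}")
```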

Trend and usage summary

Overall, Littergram usage followed different trends in the four analysis periods. Littergram grew over the year before the intervention: the number of users increased and, consequently, so did the number of pictures posted. However, in the period leading up to the intervention, between March 2017 and the beginning of June 2017, the user base and usage stabilized, and there was no significant trend in the number of pictures posted. The average number of posts per day for this period was 38.10 (SD = 14.23).

Most importantly, the number of pictures posted increased by about 61% during the intervention period, to an average of 61.25 (SD = 17.78) pictures per day. This was followed by a significant decrease, to an average of 43.75 (SD = 23.53) pictures per day, in the three months after the intervention. The decline toward pre-intervention levels once the intervention ended is consistent with a positive effect of the intervention and suggests that, at least over the period studied, the intervention needs to be maintained to work. Figure 7 shows the average daily number of posts for the four analysis periods with 95% confidence intervals.

Figure 7. Average daily number of Littergram posts for the four analysis periods with 95% confidence intervals.
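The relative changes between periods follow directly from the reported period means. A short Python check (the helper name is ours) puts the pre-to-intervention increase at just under 61%, the figure also used in the Conclusions:

```python
def percent_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100

# Mean daily posts for the three 2017 analysis periods, as reported in the text.
pre, during, post = 38.10, 61.25, 43.75

print(f"pre -> intervention:  {percent_change(pre, during):+.1f}%")   # +60.8%
print(f"intervention -> post: {percent_change(during, post):+.1f}%")  # -28.6%
print(f"pre -> post:          {percent_change(pre, post):+.1f}%")     # +14.8%
```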

Intervention impact

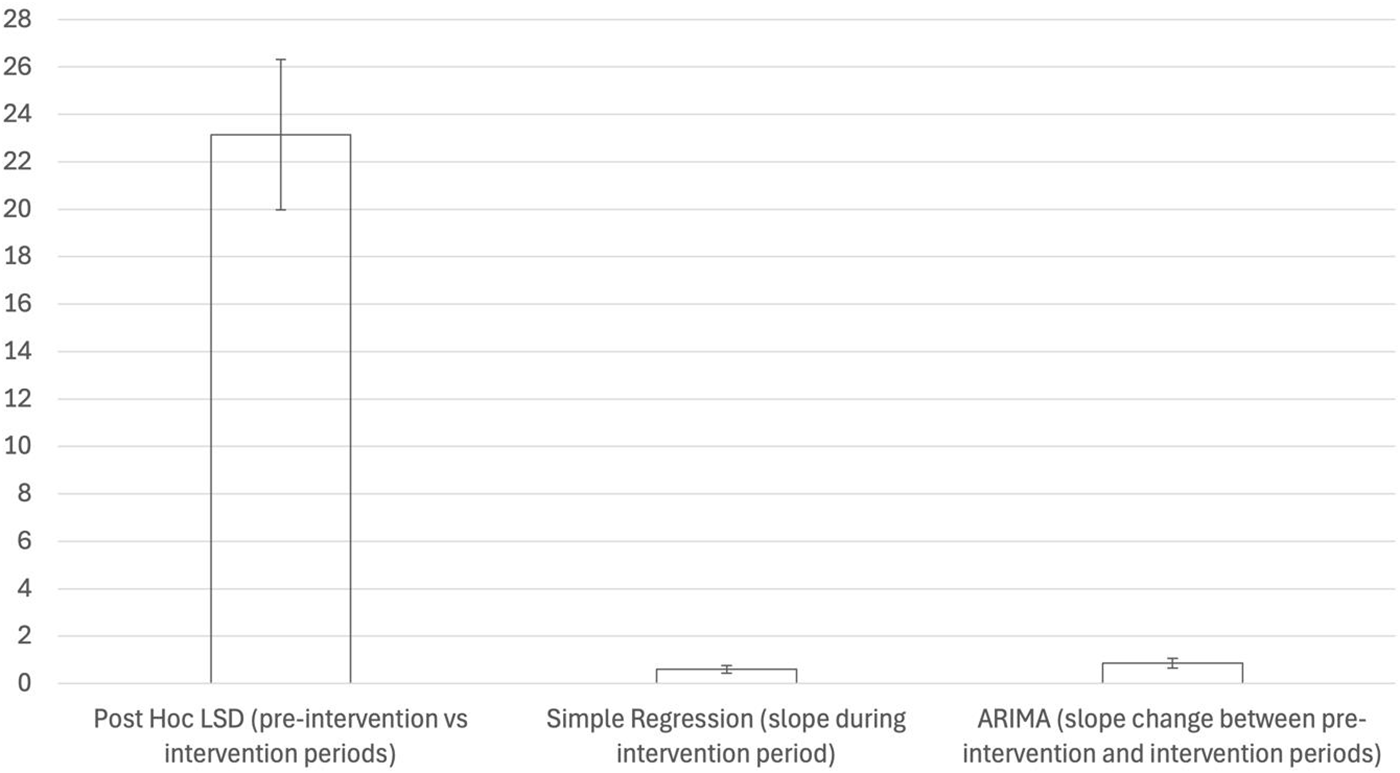

To evaluate whether the differences in usage between periods were significant, we conducted a one-way ANOVA. There was a significant effect of analysis period on the number of pictures posted per day across the four periods (F(3, 276) = 58.546; p < 0.001). Fisher’s Least Significant Difference (LSD) post hoc tests showed significant differences between all analysis periods (see Tables 5 and 6). Specifically, the number of pictures posted during the intervention was significantly higher than in the three months preceding the intervention (mean difference = 23.14; p < 0.001; 95% confidence interval 16.91 to 29.38). The average number of posts during the intervention was also higher than in the three months after the intervention (mean difference = 17.50; p < 0.001; 95% confidence interval 11.26 to 23.75). Posting in the three months before the intervention was also significantly lower than in the three months after it (mean difference = −5.64; p = 0.033; 95% confidence interval −10.82 to −0.46). None of these three differences can be attributed to changes in the number of Littergram users, as the size of the user base was constant during this time.

Table 5. Comparing the average daily Littergram posts in the four analysis periods

Table 6. Post hoc LSD tests
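Fisher’s LSD interval for a pair of periods is an ordinary two-sample t interval that uses the pooled within-period mean square error (MSE) and the within-groups degrees of freedom from the ANOVA (here 276). The stdlib-only Python sketch below computes the t quantile by numerical integration and bisection to avoid a SciPy dependency; in practice `scipy.stats.t.ppf` would normally be used. The MSE is not reported in this section: the value of about 316.7 is our back-calculation from the reported pre/post interval, assuming period lengths of 92, 48 and 91 days as implied by the regression degrees of freedom, so treat it as illustrative.

```python
import math

def t_pdf(x: float, df: int) -> float:
    """Density of Student's t distribution with `df` degrees of freedom."""
    log_norm = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
                - 0.5 * math.log(df * math.pi))
    return math.exp(log_norm - (df + 1) / 2 * math.log1p(x * x / df))

def t_cdf(x: float, df: int, steps: int = 2000) -> float:
    """CDF by Simpson's rule on [0, |x|], using symmetry about zero (steps must be even)."""
    h = abs(x) / steps
    total = t_pdf(0.0, df) + t_pdf(abs(x), df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    area = total * h / 3
    return 0.5 + area if x >= 0 else 0.5 - area

def t_ppf(p: float, df: int) -> float:
    """Upper quantile (0.5 < p < 1) of the t distribution, found by bisection."""
    lo, hi = 0.0, 50.0
    for _ in range(50):
        mid = (lo + hi) / 2
        if t_cdf(mid, df) < p:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

def lsd_interval(mean_diff, mse, n1, n2, df_within, alpha=0.05):
    """Fisher's LSD confidence interval for a difference between two group means."""
    se = math.sqrt(mse * (1 / n1 + 1 / n2))
    half_width = t_ppf(1 - alpha / 2, df_within) * se
    return mean_diff - half_width, mean_diff + half_width

MSE = 316.7      # ASSUMPTION: back-calculated from the reported pre/post interval
DF_WITHIN = 276  # from F(3, 276)
comparisons = {  # (mean difference, n1, n2) as reported in the text
    "intervention - pre": (23.14, 48, 92),
    "intervention - post": (17.50, 48, 91),
    "pre - post": (-5.64, 92, 91),
}
for label, (diff, n1, n2) in comparisons.items():
    lower, upper = lsd_interval(diff, MSE, n1, n2, DF_WITHIN)
    print(f"{label:20s} {diff:7.2f}  95% CI {lower:.2f} to {upper:.2f}")
```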

Intervention effect remains robust after controlling for serial dependency and seasonality

In the final part of our analysis, we used interrupted time series modelling with Autoregressive Integrated Moving Average (ARIMA) errors, following the Box and Jenkins (1976) approach, to control for serial dependency and seasonality in Littergram usage. This type of modelling is recommended for aggregate time-series data because it accounts for serial dependency, for example when usage on a given day is correlated with usage on previous days. Ignoring such dependency can lead ordinary regression to underestimate standard errors and misestimate p-values (Gujarati and Porter, 2009), which affects the regression results reported earlier in the Results section. ARIMA modelling can also help disentangle seasonal changes in Littergram usage, which could act as confounders. For example, the increase in posts during the intervention could have been seasonal, with more people using Littergram in early summer (mid-June to August, i.e., the intervention period) than in late spring (mid-March to mid-June, i.e., the pre-intervention period), in which case we would overestimate the intervention’s effect.
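To make the serial-dependency concern concrete: a common first diagnostic is to check whether the residuals of a daily-post regression are autocorrelated. The stdlib-only Python sketch below implements two standard checks, the lag-1 autocorrelation and the Durbin-Watson statistic (about 2 for uncorrelated residuals, well below 2 under positive autocorrelation). The simulated AR(1) series is purely illustrative and is not Littergram data; the helper names are ours.

```python
import random

def lag1_autocorrelation(residuals):
    """Sample lag-1 autocorrelation of a series."""
    n = len(residuals)
    mean = sum(residuals) / n
    dev = [r - mean for r in residuals]
    num = sum(dev[t] * dev[t - 1] for t in range(1, n))
    den = sum(d * d for d in dev)
    return num / den

def durbin_watson(residuals):
    """Durbin-Watson statistic: near 2 for uncorrelated residuals,
    well below 2 under positive serial correlation."""
    num = sum((residuals[t] - residuals[t - 1]) ** 2 for t in range(1, len(residuals)))
    den = sum(r * r for r in residuals)
    return num / den

def simulate_ar1(phi, n, seed=1):
    """Illustrative AR(1) errors e_t = phi * e_{t-1} + noise (not study data)."""
    rng = random.Random(seed)
    e, series = 0.0, []
    for _ in range(n):
        e = phi * e + rng.gauss(0, 1)
        series.append(e)
    return series

white = simulate_ar1(0.0, 500)
correlated = simulate_ar1(0.7, 500)
for name, res in [("uncorrelated", white), ("AR(1), phi=0.7", correlated)]:
    print(f"{name:16s} r1={lag1_autocorrelation(res):+.2f}  DW={durbin_watson(res):.2f}")
```

When such checks flag autocorrelation, ordinary least squares standard errors are no longer trustworthy, which is what motivates modelling the errors explicitly with ARIMA.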

After controlling for serial dependency, we still found a significant increase in posts during the intervention period: daily posts rose by 0.79 posts per day (p < 0.001; 95% confidence interval 0.43 to 1.28), compared with a slightly negative pre-intervention trend of −0.07 posts per day. In the three months after the intervention, we found a significant decline of 1.48 posts per day relative to the intervention period (estimate −1.48; p < 0.001; 95% confidence interval −2.09 to −0.85). Figure 8 shows estimates of the intervention’s effect across the different models.

Figure 8. Estimates of the intervention’s effect across the different models.
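For readers less familiar with interrupted time series, a generic segmented-regression model with ARIMA errors is written out below. This is a standard textbook parameterisation offered as an aid to interpretation, not necessarily the exact model used here (the specification, including the ARIMA orders, is given in Appendix B). Here Y_t is the number of posts on day t; T_0 is the first day of the intervention and T_1 the first day after it; D_t = 1 for t ≥ T_0 and P_t = 1 for t ≥ T_1 (0 otherwise); B is the backshift operator; and φ(B) and θ(B) are the autoregressive and moving-average polynomials.

```latex
\begin{aligned}
Y_t &= \beta_0 + \beta_1 t + \beta_2 D_t + \beta_3 (t - T_0) D_t
      + \beta_4 P_t + \beta_5 (t - T_1) P_t + \eta_t, \\
\phi(B)\,(1 - B)^d\,\eta_t &= \theta(B)\,\varepsilon_t,
      \qquad \varepsilon_t \sim \mathrm{WN}(0, \sigma^2)
\end{aligned}
```

Under this parameterisation, the pre-intervention slope is β₁, the slope during the intervention is β₁ + β₃, and β₅ measures the change in slope after the intervention relative to the intervention period; β₂ and β₄ capture immediate level shifts at T_0 and T_1.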

Finally, we used the 2016 equivalent data to test for seasonality in Littergram posts. We found no relative increase in daily posts during the 2016 intervention-equivalent period compared with the 2016 pre-intervention-equivalent period. This suggests that the increase in usage during the intervention was not a seasonal occurrence but an effect of the intervention itself, which reaffirms our conclusions regarding its success. More details on the construction of these models are provided in Appendix B.

Heterogeneity analysis

Time-based heterogeneity

To explore how the intervention’s effect varied over time, we conducted ARIMA regression analyses before and after the midpoint of the intervention period (25 days). Daily posts grew by 1.187 posts per day in the first 25 days of the intervention and by 1.192 posts per day in the next 25 days (both significant at the 1% level). The estimated effect was thus virtually identical in the first and second halves of the intervention period, which suggests that it remained relatively stable throughout.
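The split-at-midpoint idea can be sketched in Python. Note that this swaps in plain ordinary least squares for the authors’ ARIMA regressions, so it ignores autocorrelated errors and only illustrates the mechanics of fitting a separate trend to each half. The 25-day midpoint comes from the text; the series is synthetic and the helper names are ours.

```python
def ols_slope(y):
    """OLS slope of y regressed on a day index 0..len(y)-1."""
    n = len(y)
    x_mean = (n - 1) / 2
    y_mean = sum(y) / n
    sxy = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(y))
    sxx = sum((i - x_mean) ** 2 for i in range(n))
    return sxy / sxx

def split_half_slopes(daily_posts, midpoint):
    """Separate linear trends for days [0, midpoint) and [midpoint, end)."""
    return ols_slope(daily_posts[:midpoint]), ols_slope(daily_posts[midpoint:])

# Illustrative series only (not study data): slope 1.0 in the first half, 1.5 in the second.
series = [40 + 1.0 * d for d in range(25)] + [65 + 1.5 * d for d in range(25)]
first, second = split_half_slopes(series, midpoint=25)
print(f"first half: {first:.3f} posts/day, second half: {second:.3f} posts/day")
# first half: 1.000 posts/day, second half: 1.500 posts/day
```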

User-characteristic-based heterogeneity

Unfortunately, we did not have data on user demographics or geographical location with which to explore heterogeneity of the intervention’s effect across these factors. Nonetheless, we were able to conduct an ARIMA-based heterogeneity analysis by past usage. The sample was divided into two groups: (1) users who had not posted at all in the 90 days preceding the intervention period (Inactive Users) and (2) users who had posted at least once in those 90 days (Active Users).
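The Active/Inactive split by past usage can be sketched in Python as follows. The start date, user IDs and posting dates are hypothetical placeholders rather than the study’s data, and we assume that a post on the first day of the intervention does not count towards the preceding 90 days.

```python
from datetime import date, timedelta

def classify_users(post_dates, intervention_start, window_days=90):
    """Label users Active if they posted at least once in the `window_days` days
    before `intervention_start` (the start day itself excluded), otherwise Inactive."""
    window_start = intervention_start - timedelta(days=window_days)
    return {
        user: "Active" if any(window_start <= d < intervention_start for d in dates) else "Inactive"
        for user, dates in post_dates.items()
    }

START = date(2017, 6, 12)  # HYPOTHETICAL start date, for illustration only
posts = {                  # hypothetical users and posting dates
    "user_a": [date(2017, 5, 2), date(2017, 5, 20)],
    "user_b": [date(2016, 11, 3)],
    "user_c": [],
}
print(classify_users(posts, START))
# {'user_a': 'Active', 'user_b': 'Inactive', 'user_c': 'Inactive'}
```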

We found that posts increased by 0.072 per day for Inactive Users during the intervention period, but this increase was not statistically significant. In contrast, posts increased by 0.72 per day for Active Users, a result that is statistically significant at the 1% level. These findings suggest that Active Users primarily drove the intervention effect and that the intervention had no detectable effect on Inactive Users. This sheds light on what drove the intervention’s effect and on its limited ability to motivate Inactive Users. Tables with results from the heterogeneity analysis are provided in Appendix C.

Discussion

In this paper, we set out to evaluate the effectiveness of the BCW framework in designing behaviour change interventions, in the context of environmental decision-making and, specifically, littering. We further aimed to show whether and how a common and practical communication channel, such as email newsletters, can be used to deliver behaviour change interventions and to encourage people to behave more pro-socially.

The intervention was successful. The average daily number of pictures posted on the app rose significantly during the intervention period (61.25 per day), compared with usage in the three months preceding the intervention (38.10 per day) and in the three months after it ended (43.75 per day). This change cannot be explained by seasonal patterns or changes in the size of the user base.

Our findings corroborate previous research indicating that digital interventions are more effective when they are grounded in systematic theoretical frameworks and incorporate a diverse array of BCTs (Albarracín et al., 2005; Noar and Zimmerman, 2005; Abraham et al., 2009; Webb et al., 2010). The success of our intervention, which used the comprehensive BCW framework, underscores the value of theory-driven approaches in designing behaviour change strategies. We suggest that when a body of findings is consistent enough to allow a theory (essentially a causal model of the world) to be constructed, that theory is likely to reflect regularities that will be observed repeatedly. Intuitive approaches, by contrast, are more likely to be swayed by salient isolated results and to lack a coherent analysis of causality.

While most theoretically grounded interventions that have proved successful relied on a single theory (Parrott et al., 2008; Trockel et al., 2011; Kothe et al., 2012; Rompotis et al., 2014; Blake et al., 2017), our study further distinguishes itself by employing a comprehensive framework rooted in 33 empirically validated theories, made possible by the broad scope of the BCW framework. Our research also provides additional support for earlier evidence on the effectiveness of the BCW, such as Allison et al.’s (2022) work on the disposal of compostable plastics and Kolodko et al.’s (2021) work encouraging anti-littering messages on social media platforms. Because our intervention used email communication, we believe our findings may transfer to digital communication more broadly.

Interestingly, the positive change in behaviour we achieved occurred even though the TDF diagnosis on which we based our intervention used the intention to litter, rather than actual littering behaviour, as the dependent variable. This raises a question for future research: was the intervention effective because it relied on the BCW and the specific BCTs it suggested, or did the emails merely serve as reminders to use the app, irrespective of their content? To address this, a future intervention should include a control group that receives ‘dummy’ emails (with content not based on the BCW) or emails based on an intervention that is not derived from the TDF diagnosis.

Although usage in the three months after the intervention was significantly higher than in the three months preceding it (43.75 vs. 38.10 posts per day on average, respectively), the negative trend in usage after the intervention stopped suggests that usage would eventually return to its pre-intervention level. The findings indicate that sustaining the long-term impact of email messaging may require prolonged intervention delivery. Future investigations should therefore establish the optimal ‘dose’ of this treatment, considering factors such as duration and frequency. Unlike many other contexts, where an intervention is costly to deliver and can only be sustained for a limited time, newsletter-based interventions such as ours can be maintained on an ongoing basis, designed flexibly, and used to combine multiple interventions. Furthermore, studies indicate that regular nudges and customized feedback play a crucial role in maintaining long-term engagement. Fogg (2009), for example, highlights how timely reminders can effectively support behaviour change, especially when they are seamlessly integrated into users’ digital routines. In a similar vein, Michie et al. (2017) found that interventions featuring consistent feedback, goal-setting and incentives were successful in sustaining user involvement over time. Drawing on these findings, future iterations of our intervention could incorporate personalized reminders and feedback at regular intervals to re-engage users. This strategy is in line with Gardner et al. (2012), who emphasize the value of reinforcement techniques, particularly brief advice on behaviour change, in establishing habits, which here would help users incorporate pro-environmental actions into their daily lives. By implementing such periodic reinforcement tactics, similar interventions could achieve more lasting behaviour change, extending their impact well beyond the initial engagement phase.

The absence of a sustained change could also reflect how our target behaviour was operationalized. The target behaviour in this study was defined in a way that did not presuppose a long-term effect (it was ‘to post on Littergram at least three times a week’, not ‘to post at least three times a week for a year’), although one could argue that a good intervention should yield a sustained change. It is also possible that, had the intervention been based on a diagnosis that measured actual behaviour rather than behavioural intention, the identified barriers and enablers would have better captured Littergram users’ motivation and resulted in a sustained change.

While this study demonstrated increased engagement with the Littergram app, it did not directly measure whether this engagement translates into a tangible reduction in littering. This limitation reflects a broader challenge in digital intervention research: connecting digital engagement with actual behaviour change in the real world. Future research should explore methodologies to bridge this gap, such as incorporating observational assessments or surveys to track litter levels in areas with high app usage. Additionally, longitudinal studies could investigate whether sustained engagement with the app correlates with long-term reductions in littering behaviours. By examining these connections, future studies could provide valuable insights into the effectiveness of digital tools like Littergram in fostering real-world environmental impact, enhancing our understanding of how digital and physical interventions can work together to drive meaningful change.

Regarding intervention intensity, it should be noted that 1561 people, that is, 17.19% of the user base, unsubscribed from Littergram newsletters during the intervention. From a marketing point of view, this could be seen as positive, because it ‘cleared’ the user base of people who were not interested in Littergram activity, news and updates. However, it could equally be a negative consequence of the intervention, as it substantially reduced the number of people Littergram could regularly contact. Figure 9 shows the total number of unsubscribes as the intervention progressed.

Figure 9. Change in the total number of unsubscribes as the intervention progressed.

The observed unsubscribe rate also raises the question of spillover and backfire effects. On the positive side, heightened app engagement among highly interested individuals could act as a catalyst for adopting additional pro-environmental behaviours, such as joining community clean-up events, advocating for local environmental policies, or encouraging others in their networks to take action against littering. Such spillover is a valuable potential benefit of digital interventions like Littergram, which may help establish environmental awareness as a norm and lead to broader behaviour change. By fostering a sense of community and shared responsibility, digital tools can encourage users to extend their engagement beyond the app and integrate pro-environmental actions into their daily lives.

On the other hand, overexposure to communication may cause fatigue or annoyance and reduce users’ overall engagement with the campaign; some users may even develop negative attitudes towards it or become less engaged with environmental action. The 17.19% unsubscribe rate from the intervention emails signals the possibility of such backfire effects.

We were able to identify how the different types of emails contributed to the number of unsubscribes. As Figure 10 shows, two email types together accounted for a clear majority (69%) of unsubscribes: (1) Progress, which reported the number of times the person had posted on Littergram in the last 7 days, and (2) Did not post, which addressed how not posting on Littergram over the last 7 days had made the person feel. This suggests that negative feedback (related to not posting and lack of progress) may have had backfire effects during the intervention. These findings should caution policymakers against using similar BCTs when promoting pro-environmental behaviours.

Figure 10. Contribution of the different email types to the total number of unsubscribers from the emails.

Another possible explanation for the high unsubscribe rate is that the intervention was overly intensive, with too many emails sent in a relatively short period. Although the number and frequency of emails, as well as the overall duration of the intervention, were agreed with Littergram, the unsubscribe data are consistent with this possibility. However, the available data did not allow us to test it directly, partly because emails sent in the 4 weeks when both groups received the intervention were aggregated, so we could not determine precisely how many people unsubscribed after each additional email. Even such a simple analysis would be useful to check whether unsubscribe rates rose visibly after the nth email; if so, a subsequent intervention could be adjusted accordingly. Ideally, such analyses should be run on an ongoing basis to find the email frequency that yields the most posts with the fewest unsubscribes.
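The per-email analysis proposed here could look like the following Python sketch. It assumes that every remaining subscriber receives each email and that nobody new subscribes during the campaign; the 1.5× threshold for flagging a "visible increase" is an arbitrary illustrative choice, and the counts are hypothetical, since the study’s per-email data were aggregated.

```python
def unsubscribe_rates(initial_subscribers, unsubscribes_after_email):
    """Per-email unsubscribe rate, assuming every remaining subscriber receives each email
    and nobody new subscribes during the campaign."""
    rates, subscribed = [], initial_subscribers
    for unsubscribed in unsubscribes_after_email:
        if subscribed <= 0:
            raise ValueError("no subscribers left")
        rates.append(unsubscribed / subscribed)
        subscribed -= unsubscribed
    return rates

def first_jump(rates, factor=1.5):
    """1-based number of the first email whose rate exceeds `factor` times the mean
    rate of all earlier emails; None if no such email exists."""
    for n in range(1, len(rates)):
        baseline = sum(rates[:n]) / n
        if baseline > 0 and rates[n] > factor * baseline:
            return n + 1
    return None

# Hypothetical counts, for illustration only.
rates = unsubscribe_rates(9000, [100, 100, 120, 350, 200])
print([f"{r:.2%}" for r in rates])
print("first jump at email", first_jump(rates))
```

Running the same calculation on each new batch of emails would support the ongoing frequency tuning described above.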

Additionally, periodic feedback surveys could help monitor user sentiment, allowing communication strategies to be adjusted in real time. Finally, integrating AI-driven personalization could help optimize user engagement in future iterations. AI can help analyse users’ behaviour patterns, preferences and engagement levels, allowing for highly tailored communication strategies. For example, adaptive algorithms could adjust message frequency, content and tone based on individual users’ responses, potentially reducing unsubscribe rates by preventing overcommunication or irrelevant content. By carefully managing these aspects, future research can optimize digital interventions to maximize spillover benefits while minimizing backfire effects, ensuring that increased engagement contributes positively both to individual behaviour and to broader environmental goals.

Conclusions

This project was one of the first to apply a comprehensive and systematic behaviour change framework, the BCW, to littering, and to report significant results of such an intervention. We increased Littergram usage by about 61% during the intervention period and, in doing so, generated social impact in an important domain: the problem of littering in the United Kingdom.

An additional contribution of this work is a methodology for applying behaviour change theory to email communication. Because emails and newsletters (and social media more generally) are ubiquitous and easy-to-use channels, this method can help diverse public and private institutions apply behavioural science at mass scale, not only to the problem of litter but also to other environmental challenges. Such an approach could also lead to resources being used more wisely. Each digital message could be carefully designed, resulting in fewer unnecessary, low-impact messages being created, sent and received. Such a methodical approach puts quality over quantity and could, over time, reduce the noise and spam that reach our inboxes and screens every day.

Our study therefore demonstrates the potential of digital interventions to promote pro-environmental behaviours. As cities increasingly adopt smart technologies, there is an opportunity to integrate digital behaviour change strategies into urban management systems, similar to, but naturally more advanced than, our own intervention. Future research could explore how such interventions might be incorporated into smart city initiatives, potentially linking littering behaviour with other aspects of urban environmental management.

While our study used the BCW, other theoretical frameworks have also been applied to waste-related behaviours. For example, Wang and Lin (2023) used the Theory of Planned Behaviour framework to explore the role of social marketing strategies and communication design in influencing Chinese households’ waste-sorting intentions and behaviour. Future research could explore how different theoretical approaches might complement each other in addressing urban environmental challenges.

This study aligns with a wide range of research on pro-environmental behaviour in urban contexts. To take a recent example, Drosinou et al. (2023) found that environmental awareness significantly influences individuals’ pro-environmental behaviour, underscoring the importance of educational and awareness-raising components in interventions like ours. One important function of Littergram and similar apps is educational: raising awareness among both users and their audience, including people viewing Littergram messages at home and city council members receiving prompts to act.

Supplementary material

To view supplementary material for this article, please visit https://doi.org/10.1017/bpp.2025.4.

Funding statement

This work was supported by the National Recovery and Resilience Plan of the Republic of Bulgaria under contract SUMMIT BG-RRP-2.004-0008-C01.