Introduction

Statistical power is the probability to detect the effect one is looking for, given the effect exists. Hence, sufficient statistical power is a key criterion for studies based on inference statistics. Considering the convention for statistical power (preferably >80%), the fields of psychology and neuroscience suffer from a considerable lack of adequately powered studies (Szucs & Ioannidis, Reference Szucs and Ioannidis2017). For mental health, a comprehensive review of clinical trials registered in ClinicalTrials.gov (N = 96 346) revealed a modest median sample size of 61 patients per study (Califf et al., Reference Califf, Zarin, Kramer, Sherman, Aberle and Tasneem2012) which restricts sensitivity to detect treatment effects and impedes many relevant analyses (e.g. moderate between-group effect sizes caused by desirable active control group designs, or moderator analyses). It is therefore important to understand the process and influencing factors of sample planning in clinical research.

According to Altman and Simera's history of the evolution of guidelines, critically small sample sizes were mentioned as early as in the first part of the twentieth century (Altman & Simera, Reference Altman and Simera2016). Over time, increasing awareness about the importance of sample size and sample size planning (SSP) has led to the development of recommendations for SSP (cf. Appelbaum et al., Reference Appelbaum, Cooper, Kline, Mayo-Wilson, Nezu and Rao2018). For randomized controlled trials (RCTs), the CONSORT 2010 guidelines (Moher et al., Reference Moher, Hopewell, Schulz, Montori, Gøtzsche, Devereaux and Altman2012) include the following statement:

Authors should indicate how the sample size was determined. […] Authors should identify the primary outcome on which the calculation was based […], all the quantities used in the calculation, and the resulting target sample size […]. It is preferable to quote the expected result in the control group and the difference between the groups one would not like to overlook. […] Details should be given of any allowance made for attrition or non-compliance during the study.

These recommendations reassemble the most important statistical SSP determinants for RCTs. Box 1 features the relevant parameters for comprehensible SSP, which aims at providing the reader with all necessary information to assess the full process of sample size determination. From a mathematical perspective, those determinants suffice to estimate the required sample size of a given trial. Sample requirements will be higher with a lower α level and with a higher β level (study power). The smaller the expected treatment effect (e.g. using an active control condition) and the correlation among repeated measures (e.g. caused by a therapeutic change or long time intervals), the higher is the required sample size. Equally, more study conditions and higher dropout will increase demand for participants.

Box 1. Statistical sample size determinants for randomized clinical trials.

Besides those statistical determinants that are based on study design, however, it is easy to imagine that study context influences the SSP process. Relevant factors typically relate to the practical feasibility of a study. For example, funding has repeatedly been shown to impact SSP parameters, such as sample size or reported effect sizes in medicine and psychiatry (Falk Delgado & Falk Delgado, Reference Falk Delgado and Falk Delgado2017; Kelly et al., Reference Kelly, Cohen, Semple, Bialer, Lau, Bodenheimer and Galynker2006). Additionally, the ease of access to patient populations and sufficient resources constitute important factors (Dattalo, Reference Dattalo2008). At this, efficacy trials are frequently conducted as pilot studies, while effectiveness trials usually reflect later stages of research in routine care. As the last example, the provision of treatment in psychiatric care is costly, as many interventions are resource-intense and exhibit limited scalability (e.g. psychological treatment). In this regard, Internet interventions are increasingly recognized as a useful vehicle in mental health research (Domhardt, Cuijpers, Ebert, & Baumeister, Reference Domhardt, Cuijpers, Ebert and Baumeister2021), and their efficient application leads to expectably larger sample sizes (Andersson, Reference Andersson2018).

Considering this situation, SSP at times appears to be in a dilemma. On one side, statistical inference requires sufficiently large sample sizes to produce reliable outcomes. On the other side, practical restrictions, such as limited patient access, and financial resources constrain study designs. Even though some researchers argue that statistically underpowered studies do constitute a negligible problem, because individual findings could, anyways, be accumulated into meta-analytic evidence, other groups criticize this view due to the risk of introducing bias (e.g. file drawer problem, or biased estimates of treatment effects), in case a relevant proportion of studies do not find their way into the analysis (Califf et al., Reference Califf, Zarin, Kramer, Sherman, Aberle and Tasneem2012; Tackett, Brandes, King, & Markon, Reference Tackett, Brandes, King and Markon2019; Wampold et al., Reference Wampold, Flückiger, Del Re, Yulish, Frost, Pace and Hilsenroth2017). To mitigate the risk of accumulating bias, the CONSORT guideline stresses the importance of unbiased, properly reported studies that need to be published irrespective of their results (Moher et al., Reference Moher, Hopewell, Schulz, Montori, Gøtzsche, Devereaux and Altman2012). Trial pre-registration and registered reports can be seen as an increasingly recognized strategy towards such scholarly reporting practices.

Taken together, the topic of SSP (and achieved sample size) has been discussed for several decades. Previous studies have shown that current psychological and psychiatric research still suffers from low sample sizes, which can influence meta-analytic evidence or the knowledge gain from individual trials. It is therefore important to understand the factors that influence how researchers plan their sample sizes.

The present study investigated the extent to which guideline recommendations were implemented into SSP for RCTs on interventions for depression. Depression was chosen as it is one of the most relevant common mental health disorders to date, and, therefore, also constitutes a frequently investigated disorder. On a descriptive level, the provision of SSP is presented together with pre-registered and achieved sample size. In a second step, study design (e.g. number of study arms and number of repeated measures) was tested together with study context factors (e.g. funding, routine setting) to quantify their influence on actually achieved sample size. More explicitly, we were interested in the following questions: What information about SSP do studies provide? Do studies attain their pre-registered sample size? To which proportion does study design influence sample size? To which proportion does study context influence sample size? To support efforts of adequate SSP, recommendations are provided in Appendix 1.

Methods

Literature selection

We selected studies from a current meta-analysis that investigated the effects of psychological treatment for adult major depression (Cuijpers, Karyotaki, Reijnders, & Ebert, Reference Cuijpers, Karyotaki, Reijnders and Ebert2019). In this study, a database of randomized trials from 1966 until 2017 provided the primary literature. This database has been described in a methods paper earlier (Cuijpers, van Straten, Warmerdam, & Andersson, Reference Cuijpers, van Straten, Warmerdam and Andersson2008). In short, the database draws on the bibliographical databases PsycINFO, PubMed, Embase, and Cochrane Central Register of Controlled Trials and is being updated every year. Since the present study focuses on current SSP practices, we only included studies between 2013 and 2017.

Quality assessment and data extraction

We relied on quality ratings provided in the principal study. This previous quality assessment was based on four selected criteria of the Cochrane risk of bias assessment tool (Higgins et al., Reference Higgins, Altman, Gotzsche, Juni, Moher and Oxman2011). The applied criteria included the following: generation of allocation sequence, allocation concealment, masking of assessors, and handling of incomplete outcome data (e.g. intention-to-treat analyses). Principal ratings were conducted by two independent researchers, who solved eventual disagreements by discussion.

For the present study, data extraction was protocol-based (structured guide of 24 items) and was conducted in dyads by RS, TK, YT, EM, and two research assistants. The protocol entailed general items (e.g. publication year, achieved sample size, and calculated sample size), as well as basic SSP variables (number of study groups, type of control group, and number of repeated measurements), and several items for comprehensible SSP (e.g. type of statistical test, effect size, the justification for effect size, correlation of repeated measures, or expected dropout). We decided to analyze studies between 2013 and 2017, as our priority was to provide information on the current conduct of SSP. Assessment of planned sample size was based on the first available entry of the pre-registration history. The type of control group was coded as ‘passive CG’ whenever the study did not include any active comparator.

Data analysis

Data were analyzed using descriptive and inferential statistics. Descriptive statistics were used to depict the frequency and quality of SSP in current depression trials. Whenever applicable, bias-corrected and accelerated (BCa) 95% confidence intervals (CIs) were calculated. The three dependent interval variables ‘achieved sample size,’ ‘calculated sample size,’ and ‘pre-registered sample size’ were positively skewed, and, therefore, transformed logarithmically (cf. Appendix 1). All further requirements for t tests and linear regression were checked before analysis. Non-parametric tests were applied whenever requirements for parametric analysis were violated.

Multiple regression was used to predict the dependent variable ‘achieved sample size.’ The sensitivity of regression analyses (deviation from zero in a fixed model) was calculated using G*Power (Faul, Erdfelder, Buchner, & Lang, Reference Faul, Erdfelder, Buchner and Lang2009). For the model incorporating the three basic SSP variables (number of conditions, type of control group, number of repeated measures between pre- and post-assessment) in k = 78 studies with 80% power, sensitivity was f 2 = 0.145 (R 2 = 0.11, or 11% of variance). The full regression model incorporated four additional context variables: treatment modality (face-to-face/online), setting (effectiveness/efficacy), funding (yes/no), and pre-registration (yes/no). This model resulted in a sensitivity of f 2 = 0.211 (R 2 = 0.16, or 16% of variance). For the group of studies that included sample size calculation (k = 44), a second regression was carried out. This statistical model resulted in a sensitivity of f 2 = 0.273 (R 2 = 0.21, or 21% of variance) for the three SSP predictors and f 2 = 0.393 (R 2 = 0.28, or 28% of variance) for the full model.

Selection of included studies

The present study is based on selected studies from a previous meta-analysis investigating the effects of psychological treatments for depression (Cuijpers et al., Reference Cuijpers, van Straten, Warmerdam and Andersson2008) in a sample of k = 289 clinical trials. All studies of the principal meta-analysis that had been published between 2013 and 2017 were included in the analysis, resulting in a sample of k = 89 primary studies. Of those studies, a proportion of k = 11 studies (14%) was excluded. Reasons for exclusion from the analysis were as follows: not published in a peer-reviewed journal (one article), peer-to-peer treatment (two articles), depression not the primary psychiatric outcome (two articles), subclinical sample (two articles), prevention in a student sample (one article), letter to the editor (one article), actually published before 2013 (one article), and analysis limited to descriptive statistics only (one article).

Results

Characteristics of included studies

Table 1 presents the characteristics of included studies and Fig. 1 depicts the relationship between study design and achieved sample size. Of the analyzed studies, 70.0% implemented intention-to-treat analysis, 15.5% implemented per-protocol analysis, and 8.6% implemented both, resulting in 5.9% unspecified analysis. The following statistical test(s) were applied during principal analysis: linear mixed models (39.6%), analysis of covariance (ANCOVA) (31%), analysis of variance (ANOVA) (13.8), regression (10.4%), χ2 test (12.1%), and t test (19.0%).

Fig. 1. Achieved sample size and its (missing) relation to study design. Conversely, sample sizes of Internet interventions exceed those of face-to-face therapy by around 80%, which underlines the relevancy of digital psychiatry to address the issue of low statistical power in clinical research.

Table 1. Characteristics of included studies

Analysis of SSP determinants

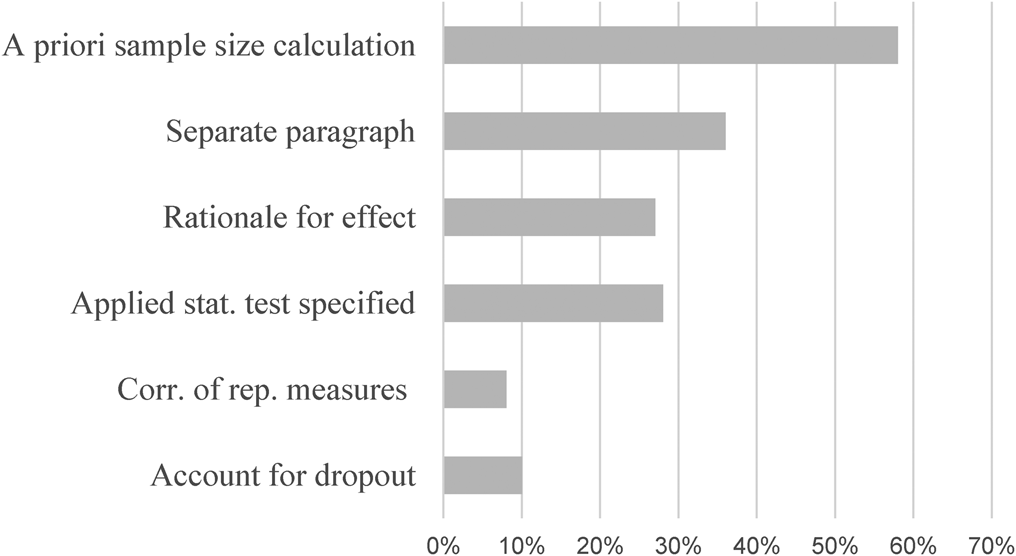

Most studies followed the significant convention of α = 5% together with power = 80%. On average, a treatment effect of d = 0.52 (s.d. = 0.17; CI: 0.46–0.59) was implemented, which did not differ by type of control group (x 2(1,23) = 1.26; p = 0.205), nor setting (x 2(1,23) = 0.525; p = 0.600). Expected treatment effects were not normally distributed, but instead exhibited clear kurtosis around d = 0.5 (64% + −0.1; cf. Appendix 1). The remaining determinants for comprehensive SSP are presented in Table 2. Figure 2 depicts the proportions of studies providing information for comprehensible SSP.

Fig. 2. Provision of sample size determinants in current trials on depression; % = percent; k = number of studies. Note that only a small fraction of trials provide sufficient information for comprehensible SSP. About one-third provides information on basic SSP determinants.

Table 2. Determinants of comprehensive sample size planning

a Between pre- and post-assessment.

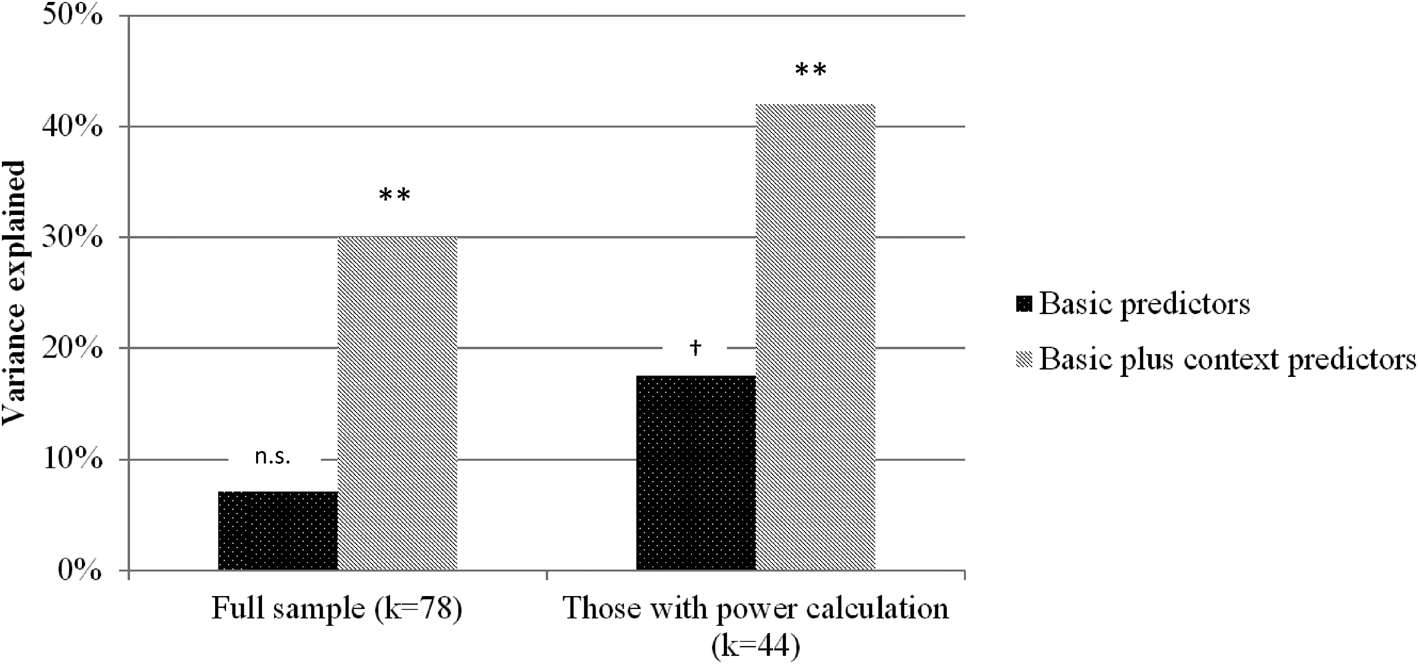

Effect of study design and study context on the sample size

This section quantified the extent to which the study design predicted achieved sample size. In two consecutive steps, three SSP determinants and four study context factors were implemented into a block multiple linear regression model to estimate their impact on sample size. Regression models were estimated for the full sample, as well as for studies featuring at least some form of SSP in their articles. For the full sample, the three basic predictors did not explain significantly more variance than the null model (R 2 = 0.07, F (3,72) = 1.79, p = 0.155). When context factors were added, the regression explained a significant proportion of variance (R 2 = 0.30, F (7,68) = 3.59, p = 0.002). A comparable pattern with higher proportions of explained variance emerged for those studies with SSP (Block 1: R 2 = 0.18, F (3,39) = 2.75, p = 0.055; Block 2: R 2 = 0.42, F (7,35) = 3.60, p = 0.005). Figure 3 depicts the proportions of explained variance for both regressions in the full sample and the SSP sample. Details on standardized regression coefficients are presented in Table 3. Furthermore, sample sizes in pre-registration corresponded to a very high extend with sample requirements during power calculation (r = 0.887, p < 0.001). An even higher correlation was found between sample size in power calculation and achieved sample size (r = 0.954, p < 0.001).

Fig. 3. Explained variance (of sample size) of three important SSP determinants, compared to a regression model implementing those predictors together with four study context variables (cf. Table 3); ** <0.01; † = 0.055; k = number of studies.

Table 3. Predictive value of study design (SSP determinants), and study design plus study context variables for the dependent variable achieved sample size

Note. k = number of studies.

Discussion

Aiming to investigate the conduct of SSP, this article analyzed 78 RCTs on psychological treatment for major depression. Besides providing information on pre-registered and achieved sample size, the article estimated the impact of study design and study context on sample size.

Principal findings indicate that the average RCT for depression includes around 100 patients. Furthermore, there was striking concordance between pre-registered, calculated, and achieved sample size, suggesting high adherence by researchers to their previously met decisions. Regarding sample size determination, however, <60% provided any information, around one-third provided a separate paragraph featuring some of the most important SSP determinants, and only one in ten studies provided full information for comprehensive SSP. The limited predictive value of study design for actual sample size was found in a regression estimating the impact of SSP determinants together with selected study context variables, with the latter leading to substantial increases in sample size.

With a median sample size of 106 patients per study, the achieved sample size was comparable to earlier findings. For example, a survey in response to recommendations of the APA Task Force on Statistical Inference investigated changes in sample size over the past 30 years. Findings revealed a median sample size of N = 107 for studies published in 2006 in the Journal of Abnormal Psychology (Marszalek, Barber, Kohlhart, & Cooper, Reference Marszalek, Barber, Kohlhart and Cooper2011). Since a significant increase in sample size only was observed from 1977 to 1996, the authors concluded that sample size remained rather constant over time—which also seems to apply to other types of clinical studies published in leading clinical psychology journals (Reardon, Smack, Herzhoff, & Tackett, Reference Reardon, Smack, Herzhoff and Tackett2019). Furthermore, a comprehensive review of studies registered in ClinicalTrials.gov found a medium sample size of only 61 participants (Califf et al., Reference Califf, Zarin, Kramer, Sherman, Aberle and Tasneem2012). Although this discrepancy appears considerable (43%), the high variance between studies suggests no meaningful difference to the present investigation. Findings, therefore, support the interpretation of moderate and rather stable sample sizes.

According to standard power calculation software (e.g. G*Power; Faul et al., Reference Faul, Erdfelder, Buchner and Lang2009), current RCTs for depression, therefore, are sufficiently powered to detect treatment effects of d = 0.5 using simple comparison (e.g. independent t test or between-group factor). Simultaneously, those numbers impede relevant analyses for the further advancement of the field, such as investigating therapy mechanisms or differential treatment effects. Additionally, many studies fail to provide a rationale for determining the expected treatment effect, while effects clearly differ as a function of study design (e.g. type of control group) and study context (e.g. efficacy v. effectiveness trial) (Cuijpers, van Straten, Bohlmeijer, Hollon, & Andersson, Reference Cuijpers, van Straten, Bohlmeijer, Hollon and Andersson2010; Kraemer, Mintz, Noda, Tinklenberg, & Yesavage, Reference Kraemer, Mintz, Noda, Tinklenberg and Yesavage2006). At this, the relevancy of study context is also being highlighted by a clear excess in the patient numbers for digital interventions compared to face-to-face treatment.

Study context appears reasonably impactful and can help explain the rather stable sample sizes. We estimated the proportions to which study context and study design (SSP determinants) influence sample size. Linear regression revealed that study context accounted for 81.1% of variance, leaving only 18.9% attributable to SSP determinants (cf. Fig. 2). This means that in the overall picture study context has been identified as a crucial factor in sample size determination. This proportion shifted to 58 and 42% of explained variance for those studies that featured SSP in their articles. Despite more balanced proportions in this subgroup, this pattern still underlines the relevancy of the study context even for RCTs featuring SSP. It, therefore, seems advisable to pay attention to restrictions arising from the study context. For example, more emphasis should be placed on intense assessment to increase statistical power, which we address later in this section. Another context factor concerns the distinction between efficacy and effectiveness studies. There exists solid evidence for higher treatment effects in efficacy trials (Cuijpers et al., Reference Cuijpers, van Straten, Bohlmeijer, Hollon and Andersson2010; Kraemer et al., Reference Kraemer, Mintz, Noda, Tinklenberg and Yesavage2006). However, many studies fail to mention this aspect when providing a rationale for their proposed treatment effect. Finally, some guidelines suggest to incorporate budget considerations into SSP (Bell, Reference Bell2018). Representing a limitation to our findings, the presented proportions should be interpreted as approximations depending on the specified regression model. Additionally, sample size limits the information about single determinants of the regression model, which is why we abstain from interpreting single predictors in the model.

Considering the rather stable trial numbers of the last decades, the clear excess in achieved sample size of digital interventions (around 80%) appears particularly meaningful. Almost all interventions were designed as guided Internet-based treatment, which has been found effective for many common mental health disorders, and which was on par with face-to-face treatment in a recent meta-analysis (Carlbring, Andersson, Cuijpers, Riper, & Hedman-Lagerlöf, Reference Carlbring, Andersson, Cuijpers, Riper and Hedman-Lagerlöf2018). Together with other advantages, such as standardized and efficient treatment provision, digital interventions can be regarded a statistically powerful vehicle in the toolbox of contemporary psychiatric research.

As a related aspect, the practical costs of automatized intense assessment are decreasing, as so-called blended interventions are increasingly being tested or implemented into psychiatric care (Kooistra et al., Reference Kooistra, Wiersma, Ruwaard, Neijenhuijs, Lokkerbol, van Oppen and Riper2019; Lutz, Rubel, Schwartz, Schilling, & Deisenhofer, Reference Lutz, Rubel, Schwartz, Schilling and Deisenhofer2019). In short, blended therapy can be regarded as computer-supported and app-supported face-to-face treatment, which has been tested for individual and group treatment of common mental health disorders (Erbe, Eichert, Riper, & Ebert, Reference Erbe, Eichert, Riper and Ebert2017; Schuster et al., Reference Schuster, Kalthoff, Walther, Köhldorfer, Partinger, Berger and Laireiter2019). The magnitude of expectable gains in statistical power due to automatized intense assessment is considerable and could help to mitigate the current situation without necessarily increasing sample sizes—that are frequently being limited by restricted resources. For example, a hypothetical RCT with point assessments of psychopathology (pre–post assessment by questionnaire) would require 90 patients, but 8 (bi-) weekly assessments during the active trial phase reduce this number to 42–50 patients. At this, short pre–post ecological momentary assessment (EMA) can offer further choices for study design (Schuster et al., Reference Schuster, Schreyer, Kaiser, Berger, Klein, Moritz and Trutschnig2020).

Regarding sample size in the context of prospective study planning, present data indicate that researchers adhered in a striking manner to the specifications made during trial pre-registration. This is reflected by very high correlations between planned, required, and achieved sample size. Additionally, many studies pre-registered their trial, which probably reflects a general trend towards pre-registration (Nosek & Lindsay, Reference Nosek and Lindsay2018; Scott, Rucklidge, & Mulder, Reference Scott, Rucklidge and Mulder2015). For RCTs in clinical psychology, lower rates have been reported until recently (Cybulski, Mayo-Wilson, & Grant, Reference Cybulski, Mayo-Wilson and Grant2016; Scott et al., Reference Scott, Rucklidge and Mulder2015), suggesting progress in the practice of prospective study registration.

For scholarly SSP (cf. CONSORT 2010 guidelines in Box 1), however, a good part of the road to rigor still lies ahead. Only one-third provided information on basic SSP determinants, and only one in ten studies provided sufficient information for comprehensible SSP. It, therefore, remains unclear, how pre-registered sample sizes and sample sizes in SSP were exactly determined. For example, effect sizes for SSP for the wider field of psychology usually follow a more ample distribution (Kenny & Judd, Reference Kenny and Judd2019; Kühberger, Fritz, & Scherndl, Reference Kühberger, Fritz and Scherndl2014), as one would expect from a complex situation. The present analysis, however, revealed a narrow peak of expected effect sizes exactly at d = 0.5 (cf. Appendix 1). Additionally, practically all studies with more than two trial arms (e.g. two active and one passive group) featured only one effect size for SSP. Furthermore, less than one-third provided a rationale for how the proposed effect size was determined. These findings fit Cohen's speculation that ‘low level of consciousness about effect size’ might contribute to the problem (Cohen, Reference Cohen1992; Maxwell, Reference Maxwell2004), and they also fit with a related phenomenon previously described as sample size samba (Schulz & Grimes, Reference Schulz and Grimes2005).

With regard to the limitations of the reported findings, the following considerations should be taken into account. The sample size of the studies under investigation was subject to wide variation, including small feasibility studies and large multicenter trials. In view of this fluctuation, more extensive meta-analyses could provide additional results. For example, it would have been interesting to investigate SSP in specific study clusters. Given the assumption that Internet interventions are less restricted by study context, it would also have been interesting to investigate SSP in this group more closely. Regarding the conducted regression analyses, it should be noted that the proportion of explainable variance depends on the variables included in the model. Here, central study design and study context variables were included, but other parameters could be added as well. Importantly, as the present sample was restricted to 78 studies, we tried to abstain from interpreting single predictor variables of the multiple regression due to the risk of fluctuation. Instead, statistical power is sufficient to interpret the reported blocks of study design and study context. Concerning the generalizability of the reported findings, it can be assumed that reported patterns are likely not restricted to depression research. At the same time, further investigations are needed to draw safe conclusions. For this purpose, revision of SSP practice of RCTs for other common mental health disorders would be advisable.

Conclusions

Although SSP is central to the planning of efficient trials, the majority of RCTs for the treatment of depression use no or limited SSP. While the case numbers of pre-registered studies have been achieved, the factors for calculating the required sample size remain unclear. The comparison of study design and study context showed a high relevance of study context, which probably is related to the rather stable trial numbers of the last decades. Here, the advancing developments in the field of digital psychiatry can provide feasible strategies (e.g. intense assessment and Internet-based treatment) to improve the situation.

Supplementary material

The supplementary material for this article can be found at https://doi.org/10.1017/S003329172100129X.

Financial support

No funding received.

Conflict of interest

The authors declare no competing financial interests or other conflicts.