1 Introduction

1.1 Models

Imagine that you’re a shipbuilder working with ocean liners like SS Monterey (Figure 1a). Matson Navigation Company has re-purchased the liner from the US government, to whom they had sold the ship following its utilisation during the Second World War. The company now deems the ship too slow for its San Francisco–Los Angeles–Honolulu run, and tasks you to redesign its engine so that it will be able to sail at a certain speed whilst carrying a certain load. What is the minimal power the engine must have to ensure it’s up to the task? You could of course just make a guess, install a certain engine, and see whether the ship runs at the right speed when it’s back in the water. If you’re lucky, the ship works as it is supposed to. But there’s a good chance it won’t, and that would be a costly failure. A better way to proceed is to construct a model of the ship, a scaled-down version of the real ship you’re overhauling, and perform experiments on that model. The model must be carefully constructed: it has to have a shape that reflects the shape of the full-sized ship you’re ultimately interested in. And the experiments that you perform on the model have to be carefully designed. In this case, you want to measure the complete resistance,

![]() , faced by the model ship as it is propelled through the basin at velocity

, faced by the model ship as it is propelled through the basin at velocity

![]() , because this gives you information about how powerful the engine needs to be. Experiments of this kind are standard practice in the process of designing ships. In Figure 1b, we see a model ship being moved through a towing tank.

, because this gives you information about how powerful the engine needs to be. Experiments of this kind are standard practice in the process of designing ships. In Figure 1b, we see a model ship being moved through a towing tank.

Figure 1a The target system: An ocean liner like SS Monterey

Figure 1b Model ship in towing tank

But however carefully you construct your model, and however carefully you perform experiments on it, you’re not investigating the model for its own sake. Ultimately, you want to use the results of your investigations to inform you of another system: SS Monterey at sea. So you have another task at hand: you have to translate your experimentally discovered facts about the model into claims about the full-sized ship. This translation procedure is subtle and complex: it is informed by our theoretical background knowledge about fluid mechanics, and clever ways of thinking about things like scale, length, and resistance. We’ll come back to these later in the Element. For now, what’s important is the general pattern of reasoning: the shipbuilder first constructs a scaled-down model of the ship, investigates how the model behaves, and then translates facts about their model into claims about the actual ship.

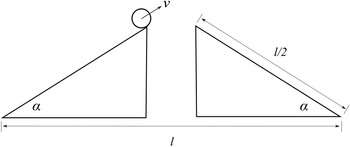

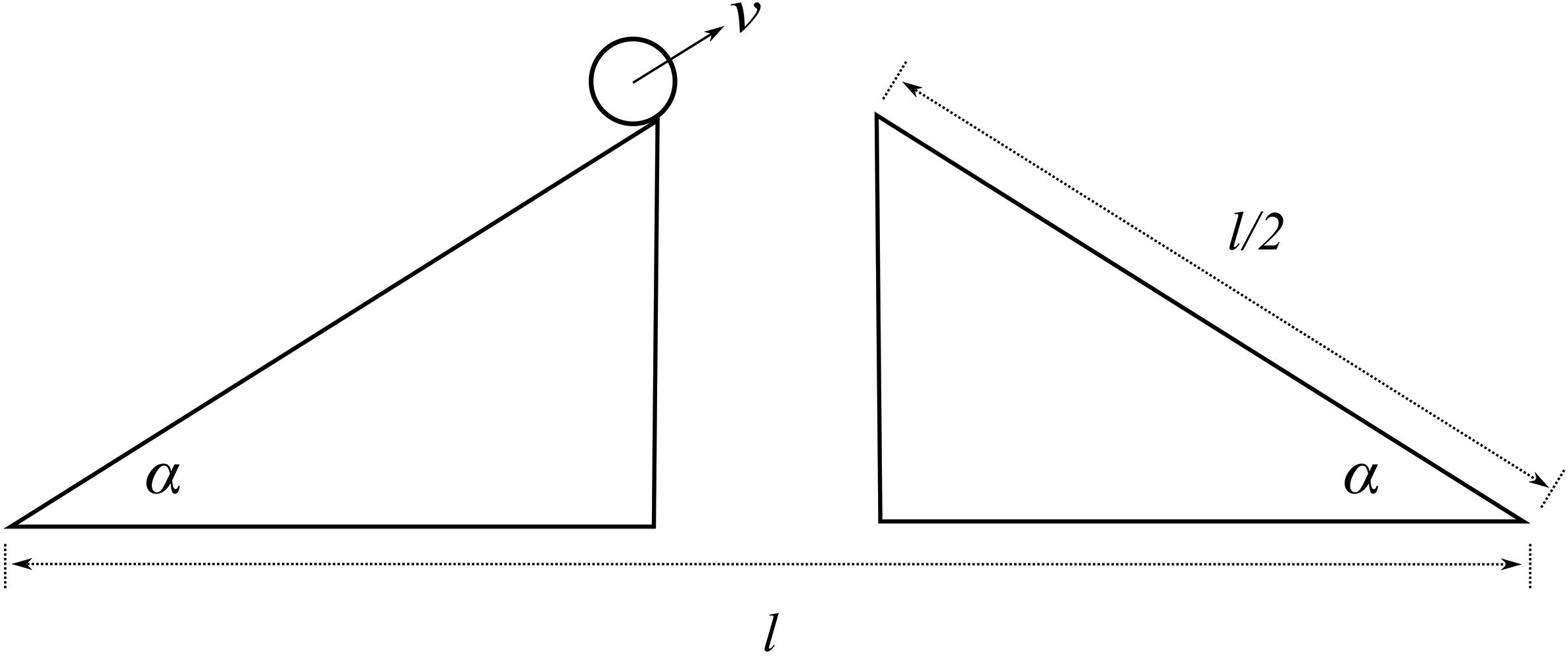

Now leave ships behind and imagine you’ve landed a job as a stunt planner for the next instalment of the 007 franchise. You are planning an exhilarating car chase through the streets of London, culminating in the British spy launching their Aston Martin across Tower Bridge.Footnote 2 The bridge is a drawbridge and the car is supposed to jump over the bridge when it’s half open, as displayed in Figure 2a. The producers tell you the angle,

![]() , at which they want the leaves (i.e. the arms) of the bridge for dramatic effect. How fast does 007 have to drive at the jump off point to ensure that the car lands safely on the other side?

, at which they want the leaves (i.e. the arms) of the bridge for dramatic effect. How fast does 007 have to drive at the jump off point to ensure that the car lands safely on the other side?

Figure 2a Tower Bridge half openFootnote 1

Unlike our shipbuilder, you don’t produce a scale model of the situation in which you make a small remote-controlled model car jump across a model bridge. Instead, you revert to the power of the imagination and the laws of Newtonian mechanics. You imagine a scenario with two inclined planes facing each other with a gap in the middle. You then imagine a perfect sphere moving up one of the planes with constant velocity

![]() . You imagine that both the planes and the sphere are on the Earth’s surface, that all other material objects in the universe have vanished, and that the planes and the sphere are in a vacuum. On the basis of these assumptions, the Earth’s gravity is the only force acting on the sphere; that is, the sphere is not subject to all the other forces that a real car jumping across a real bridge would experience, such as air resistance and the gravitational pull of other pieces of matter in the universe. This is your model of the bridge jump, where, of course, the two inclined planes stand for the two leaves of the bridge and the sphere for the car. The model is sketched in Figure 2b.

. You imagine that both the planes and the sphere are on the Earth’s surface, that all other material objects in the universe have vanished, and that the planes and the sphere are in a vacuum. On the basis of these assumptions, the Earth’s gravity is the only force acting on the sphere; that is, the sphere is not subject to all the other forces that a real car jumping across a real bridge would experience, such as air resistance and the gravitational pull of other pieces of matter in the universe. This is your model of the bridge jump, where, of course, the two inclined planes stand for the two leaves of the bridge and the sphere for the car. The model is sketched in Figure 2b.

Figure 2b Sketch of the bridge jump model

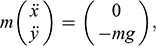

You now apply Newtonian mechanics to your model and find that the equation of motion for the sphere is:

(1)

(1)

where the

![]() is the horizontal, and

is the horizontal, and

![]() the vertical, coordinate of the ball;

the vertical, coordinate of the ball;

![]() is the gravitational constant on the surface of the Earth;

is the gravitational constant on the surface of the Earth;

![]() is the mass of the sphere; and the two dots on x and y indicate the second derivative with respect to time (and recall that this second derivative of a position is acceleration, and so

is the mass of the sphere; and the two dots on x and y indicate the second derivative with respect to time (and recall that this second derivative of a position is acceleration, and so

![]() and

and

![]() correspond, respectively, to the sphere’s acceleration in the horizontal and vertical directions). According to this equation, the sphere moves at a constant horizontal velocity, and accelerates towards the ground at a rate equal to mg. The general solution to this equation is:

correspond, respectively, to the sphere’s acceleration in the horizontal and vertical directions). According to this equation, the sphere moves at a constant horizontal velocity, and accelerates towards the ground at a rate equal to mg. The general solution to this equation is:

(2)

(2)

where

![]() and

and

![]() are the initial conditions (that is, the position of the sphere when it starts the jump);

are the initial conditions (that is, the position of the sphere when it starts the jump);

![]() and

and

![]() are the components of the sphere’s velocity in the x-direction and the y-direction, respectively; and

are the components of the sphere’s velocity in the x-direction and the y-direction, respectively; and

![]() is time. From this general solution, you can calculate the minimal velocity that the sphere must have to land on the other inclined plane without falling into the gap between them:

is time. From this general solution, you can calculate the minimal velocity that the sphere must have to land on the other inclined plane without falling into the gap between them:

(3)

(3)

Given the angle

![]() that the producers want for dramatic effect, and the length

that the producers want for dramatic effect, and the length

![]() of the bridge, this formula tells you the minimum velocity with which the sphere must move up the inclined plane to fly across the gap and land safely on the other side.

of the bridge, this formula tells you the minimum velocity with which the sphere must move up the inclined plane to fly across the gap and land safely on the other side.

But real cars (even Aston Martins) aren’t spherical and don’t move in a vacuum. And you’re not interested in spheres in vacuums per se. What you really want to know is the velocity at which the actual car has to move up the half open bridge to avoid plummeting into the Thames. So the question is: what can you learn about real cars jumping across half open drawbridges from spheres moving on inclined planes in a vacuum? To answer this question, you have to face the task of translating facts about the model into claims about the actual situation, just like you did when you faced the task of redesigning a ship. Again, we will see in Section 4 how this should be done.

Despite their differences, both of these scenarios rest on a common style of reasoning. You investigate one system, a model, and use the results of that investigation to inform yourself about another system, a target. In the first case, the model is a concrete physical system itself; it is constructed out of steel (or wood, or paraffin wax, depending on the era) and the shipbuilder performs physical experiments directly on the model. In the second case, the model is a combination of an imagined scenario and mathematical equations (derived from Newtonian mechanics), which the stunt planner can investigate, and there are facts about this model and the solutions of its equations. But neither the shipbuilder nor the stunt planner is interested in their models per se; they are interested in what the models are about. So to reach the end point of their investigations they have to translate their model results into claims about another system: the full-sized ship moving through water and the car jumping the bridge.

The aforementioned examples are not isolated instances of some outlandish style of reasoning. Models are used across the sciences; they are one of the primary ways in which we come to learn about the world. Scientists construct models of atoms, black holes, molecules, polymers, populations, DNA, rational decisions, financial markets, climate change, and pandemics. Models provide us with insight into how selected parts or aspects of the world work, and they act as guides to action. Much of scientific knowledge, and understanding, is ultimately based on the results of some modelling endeavours.

How do models work? How can the investigation of a model possibly tell us anything about something beyond the model, some system out there in the world? Our answer here is that models do this because they represent their targets. Just as the scale model in the shipbuilder’s tank represents the full-sized ship and the stunt planner’s model represents the actual car, the physicist’s model of a black hole represents parts of the universe from which no light can escape; the economist’s macroeconomic model represents the actual economy of a given country; and the epidemiologist’s model represents how a disease will spread through a country as a result of various policy interventions by a government.

As these examples suggest, models can be used to represent particular target systems like a particular government’s economy, a specific ship, and so on. But they can also be used to represent types of targets. Depending on the details, economists might employ models to reason about economies in general; a physicist can use a model to represent a type of atom like hydrogen; the model ship can be used to represent a certain type of ship; and the mathematical model of 007’s stunt can also be used to represent a type of stunt involving car jumps across bridges (a few exemplars of which are mentioned in Footnote 2). So by ‘target system’ we can mean both specific systems and types of systems.

In each of these cases the model stands in for ‘the’ target system; the model is the secondary system that scientists investigate, with the hope that the results of their model-based investigations will deliver insight into their targets. So in order to understand how model-based reasoning works, we need to understand how models represent. In this Element, we provide a philosophical investigation into this question.

At this point, one might worry that our focus on models is too narrow. Scientists use plenty of other kinds of representations to reason about systems in the world: doctors use MRI scans to learn about brain structure; particle physicists pore over bubble chamber photographs to learn about the nature of subatomic particles; and astronomers study the images produced with telescopes. But it pushes the limits of language to deem any of these kinds of representations models. Moreover, there are plenty of non-scientific representations that function in a similar way. All of us are familiar with using maps to navigate new cities, and we regularly make inferences about the subjects of photographs based on features of the photograph. In general, we call a representation that affords information about its target an epistemic representation, and it is clear that models are just one kind of such representation. Given this, there is a question whether our analysis in this Element covers things beyond models; whether it covers epistemic representations more generally. By and large we think it does, and most of the existing accounts of how models represent end up being accounts of epistemic representation more generally.Footnote 3 In this Element, we primarily focus on models because models are crucial to the working of modern science, and because discussing different kinds of representations side by side would end up using more space than we have. We will briefly broaden our scope in Section 4, and readers who are interested in other kinds of epistemic representations – images, and certain works of art, for example – are encouraged to consider how what we say applies to these as they proceed through the following sections.

1.2 Questions Concerning Scientific Representation

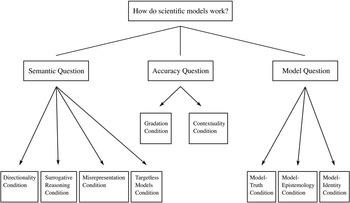

At first glance it might seem like there is only one question to be asked here: how does a model represent its target? But looking a little closer, we see that this question breaks up into several different questions. To have a clear focus in our investigation, it’s important to disentangle these and clarify how answers constrain the shape of the conceptual landscape concerning how models work. This is the project for this section, in which we lay out the relevant questions; in the next, we discuss what it takes to answer them appropriately.

The first, and most fundamental, question to investigate is: in virtue of what does a model represent its target? Call this the Semantic Question.Footnote 4 Answering this will allow us to understand how the steel vessel that is dragged through the towing tank comes to represent a real ship, and how the shipbuilder manages to translate results of model experiments into claims about a full-sized ship; likewise, it will allow us to understand how an imagined scenario consisting of two inclined planes and a sphere in a vacuum comes to represent a real-world bridge jump, and how the stunt planner manages to plan the jump based on the model.

It’s of paramount importance that we don’t confuse this question with a closely related one, namely: what makes a model an accurate representation? A model can represent its target, without doing so accurately. To see this, alter the aforementioned examples slightly. You may not have a very good initial idea of the ship’s shape because you haven’t been able to measure it up and no plans are available. So you may decide to use an empty barrel as a model of the ship. When you finally see the ship, you realise that this is a bad model because it doesn’t have the form of the ship at all. Nevertheless, the barrel is a representation of the ship; it’s just not an accurate representation. Or assume that an error has been made in measuring the angle of the open leaves of the drawbridge, and the angle in reality is twice the angle in your model. As a result, the model will underestimate the velocity needed to get to the other side, and the car, along with the stunt driver, will plummet into the river. If this happens, the model is not an accurate representation of the target system, but it is a representation of it nevertheless.

The lesson is that we should distinguish between the question of what turns something into a representation of something else to begin with (the Semantic Question), and the question of what turns something into an accurate representation of something else. Call the latter the Accuracy Question. The Semantic Question is conceptually prior in that asking what makes a model an accurate representation presupposes that it is a representation in the first place: a model cannot be a misrepresentation unless it is a representation. But once this has been established, there is a genuine question about what it takes for a representation to be accurate.

The distinction between representation and accurate representation is not an artefact of the simple examples we have used to illustrate it. The history of science provides us with a wealth of examples of inaccurate, but nevertheless representational, models. Ptolomy’s model of the solar system represents the solar system even though it is inaccurate with respect to the orbit of the Earth around the Sun. Thompson’s ‘plum pudding’ model represents atomic structure, but it is inaccurate with respect to the distribution of charge within an atom. Fibonacci’s model of population growth is inaccurate with respect to the long-term growth of a population because it assumes that organisms are immortal and food supplies are unlimited. But despite their inaccuracies, all of these models represent their targets.

So far in this section we’ve distinguished between the question of what makes a model represent, and what makes it accurate. But some might worry that there’s an even more prior question lurking in the background: what is a ‘model’? Call this the Model Question. In the case of the model ship the answer is relatively straightforward: the model is the concrete material object towed through the tank. But many models aren’t like this; they are, to use Ian Hacking’s memorable phrase, things that we ‘hold in our heads rather than our hands’ (1983, 216). It has become customary to refer to such models as ‘non-concrete’ models. The model of the car jump is of this kind. Earlier we said it was an imagined scenario combined with mathematical equations from Newtonian mechanics, and we also said that there were facts about this model that the planner would investigate. One option then, would be to identify this model, and others like it, with mathematical entities (and then leave mathematicians and philosophers of mathematics to work out what they are). But there’s a lingering worry that this isn’t the whole story. The car jump model might involve mathematics, but it’s not obviously purely mathematical. Before writing down equations, the stunt planner had to imagine a scenario with a sphere moving in the vacuum on an inclined plane. And it’s not clear that this can be accounted for by identifying the model with something purely mathematical. A philosophical account of modelling should have something to say about how we should think about models of this kind. This goes beyond metaphysical bookkeeping; as we will see, how one answers the Model Question has implications for how we understand the Semantic Question and the Accuracy Question.

1.3 What Does Success Look Like?

The next thing to establish is the success conditions on answers to these questions. What counts as a successful answer to the aforementioned questions?

We begin with conditions on answers to the Semantic Question. First and most straightforwardly, there is a direction to the representation relationship that holds between models and their targets. Typically at least, models represent their targets, but not vice versa: the scale model in the towing tank represents SS Monterey, but SS Monterey doesn’t represent the scale model. We say ‘typically’ here because we are not assuming that it’s a conceptual impossibility; in some special cases a representational relationship can hold both ways. Rather, we require that any answer to the Semantic Question should not entail that the model–target representation relation is always symmetric. We call this the Directionality Condition.

Another important condition of success on answering the Semantic Question is accounting for the fact that models are informative about their targets. Some representational relationships do not work this way: the term ‘atom’ can be seen as representing (at least in some sense) atoms, but it’s uninformative: no investigation into the term itself will allow us to extract any information about what it refers to. In contrast, a model represents its target in a way that does allow such information extraction (although, of course, that information doesn’t have to be true, because models don’t have to be accurate). To use Reference SwoyerSwoyer’s (1991) phrase, models allow for surrogative reasoning: by investigating the behaviour and features of the model, scientists can generate claims about the behaviour and features of its target. We call this the Surrogative Reasoning Condition.

The distinction between representation and accurate representation motivates a further condition for success: any answer to the Semantic Question should be compatible with the fact that models can misrepresent their targets; no answer should entail that all representations are accurate, nor that inaccurate models are non-representations. In brief, a viable answer to the Semantic Question must distinguish between misrepresentation and non-representation. This is not to say that all models are inaccurate (although there is reason to think that no model represents with perfect accuracy). Indeed, much of the motivation for investigating how models work stems from the fact that at least some of them are accurate; some of them are paradigmatic instances of the cognitive success of the scientific endeavour. But it remains that some models misrepresent, and thus whatever it is that establishes a representational relationship should not equate representation with accurate representation. We call this the Misrepresentation Condition.

The previous condition relates to models that represent actual targets in the world (whether specific systems, or types of systems), but do so inaccurately. We should also recognise that some models don’t represent any actual target whatsoever. Straightforward examples of models of this sort include engineering models of structures never built. Vary our initial example slightly and assume that you are tasked with designing a new ship rather than redesigning an existing one. But for some reason the ship is never built. In that case your model doesn’t represent anything in the world. The same goes for architectural models of buildings that have never been constructed and models of spacecraft that have never been realised. Targetless models aren’t unique to fields that are in the business of constructing something; such models also appear in theoretical science. Population biologists construct and investigate models involving a population consisting of four different sexes to see how such a population would develop; elementary particle physicists study models of particles that don’t exist to learn about techniques like renormalisation; and philosophers of physics construct models in accord with the principles of Newtonian mechanics in order to demonstrate that the theory is consistent, under certain conditions, with indeterminism, without the model representing, or indeed being intended to represent, any system in the world.Footnote 5 A philosophical account of modelling should accommodate models that don’t have real-world targets. We call these ‘targetless models’, and the condition that they be allowed for the Targetless Models Condition.

We now turn to the Accuracy Question. Unlike truth, which (many people think) is an all-or-nothing matter, accuracy comes in degrees. Models can be more or less accurate, depending both on the scope of the features they represent, and on how well they represent those features. And the fact that a model misrepresents some aspects of its target doesn’t entail that it misrepresents all aspects. For example, whilst the Ptolemaic model of the solar system might misrepresent the structure of the celestial orbits, it accurately represents, to some degree, the relative position of the celestial bodies as seen in the night sky from the surface of the Earth. So its accuracy falls somewhere between perfect accuracy and total misrepresentation. Any tenable notion of accuracy must allow for, and indeed make sense of, such gradations. We call this the Gradation Condition. This condition comes into contact with the Misrepresentation Condition discussed earlier. The less accurate a model, the more it misrepresents. So requiring that any answer to the Semantic Question allow for misrepresentation entails requiring that any answer to the Accuracy Question allow for there being models with low-grade accuracy, and vice versa.

What counts as accurate depends on the context in which a model is used, and on the aims and purposes of the model user. An architectural model that provides measurements that are correct within a ±5 mm error margin is extremely accurate; a molecular model that predicts the extension of a molecule with a precision of ±5 mm is an egregious failure. Indeed, the very same model may count as accurate for one purpose, but not for another. This happens, for example, when context restricts which features of a target system must be represented accurately in order for the model to count as accurate. As Kuhn notes, in the context of navigation and surveying, the Ptolemaic model is still employed today, and the model is accurate for these purposes (Reference KuhnKuhn 1957, 38). But in the context of using the same model to explain why planets appear where they do, the model is inaccurate. In general, an assessment of what counts as accurate must take contextual factors into account. We call this the Contextuality Condition.

The Model Question comes with its own associated conditions of success. Recall what it asks: what are models? Any answer to this question has to help us understand what scientists are talking about when they’re talking about their models, especially when they are talking about the model as having an, in some sense, independent existence from any target system it represents.

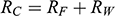

There is right and wrong in the model: certain claims are true in the model and some are false. A first condition on a successful answer to this question therefore is that it provide an account of truth in a model, model-truth for short, and accordingly we call claims that are false in a model model-false. In certain cases, model-truth corresponds to what we standardly mean by ‘truth’. To say that it is model-true that the concrete ship model experiences a complete resistance of a certain strength

![]() is the same as to say that it is true that it experiences that resistance. In general though, model-truth is not the same as truth simpliciter. The claim ‘the sphere moves on an inclined plane’ is model-true, but false in the world (there is no such sphere!). Moreover, it is model-false that it loses mass on the way. But on what basis are some propositions model-true, and others model-false? As we have seen previously, there is no sphere moving up an inclined plane, and so the claim cannot be true in the same sense in which ‘the car moves on Tower Bridge’ is true.

is the same as to say that it is true that it experiences that resistance. In general though, model-truth is not the same as truth simpliciter. The claim ‘the sphere moves on an inclined plane’ is model-true, but false in the world (there is no such sphere!). Moreover, it is model-false that it loses mass on the way. But on what basis are some propositions model-true, and others model-false? As we have seen previously, there is no sphere moving up an inclined plane, and so the claim cannot be true in the same sense in which ‘the car moves on Tower Bridge’ is true.

This question is pressing for two independent reasons. First, we often attribute material features, like having a certain velocity or being acted on by gravity, to non-concrete models. But if these models aren’t actually existing physical objects, then in what sense, if any, can they have such features? Notice that this problem remains even if one answers the Model Question by identifying such models with mathematical systems: even though mathematical objects exist in some sense, they’re not obviously the sort of things that can have material features (gravity doesn’t act on mathematical objects!). Second, let us call the description that is used to introduce the model the model-description. Sometimes we can settle the question of whether a proposition is true in the model by appeal to the model-description: if the proposition is contained in the model-description it is model-true. But we often care about model-truths that the model-description remains silent about. We might say that ‘the sphere moves on an inclined plane’ is model-true because the model-description says that it does. But the model-description does not contain anything like ‘the sphere moves in a parabolic orbit once it leaves the inclined plane’, and so this route is foreclosed in this case. Since we are mainly interested in claims that are not explicitly stated in the original model-description (recall that the main result that the model delivers is Equation (3), which is not stated in the model-description!), an answer to the Model Question must help us understand how claims made about models, including those attributing material features to them, can be model-true (or model-false). Call this the Model-Truth Condition.

Modellers spend a lot of time investigating model-truths. Models are tools of investigation and they can serve this purpose only if the users of models can come to know what is true in the model and what isn’t. If there is no way for you to find out what resistance the model ship experiences when it’s dragged through the tank, or how far the sphere will fly once it’s airborne, then the models are useless. For this reason, the next condition concerns the epistemology of models. Any story concerning how to understand model-truth must be accompanied by a story about how we can come to know these model-truths and how we justify our findings. Call this the Model-Epistemology Condition.

Third, an answer to the Model Question should provide identity conditions for models: under what conditions are scientists talking about, and investigating, the same model? The ship model can be made from metal or from wax. So they would be different material objects, but would they still be the same model? Problems concerning identity also arise with non-concrete models. It’s commonplace that one can describe the same object in many different ways, and this also goes for models. Someone else could have described the car jump model using a different set of sentences than the ones used at the beginning of this section, and yet they could have described the same model. But on what grounds do we assert that this alternative model-description would really describe the same model? In the case of non-concrete models, we can’t clear up potential ambiguities by simply pointing to an object and say ‘that’s what I’m talking about’. Non-concrete models are given to us only through model-descriptions, and so there is a question about when two model-descriptions specify the same non-concrete model. Any answer to the Model Question must provide the conditions under which models are identical. Call this the Model-Identity Condition.

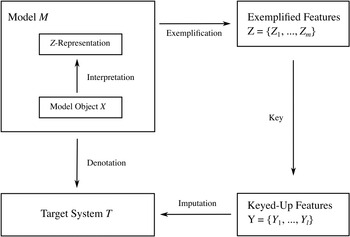

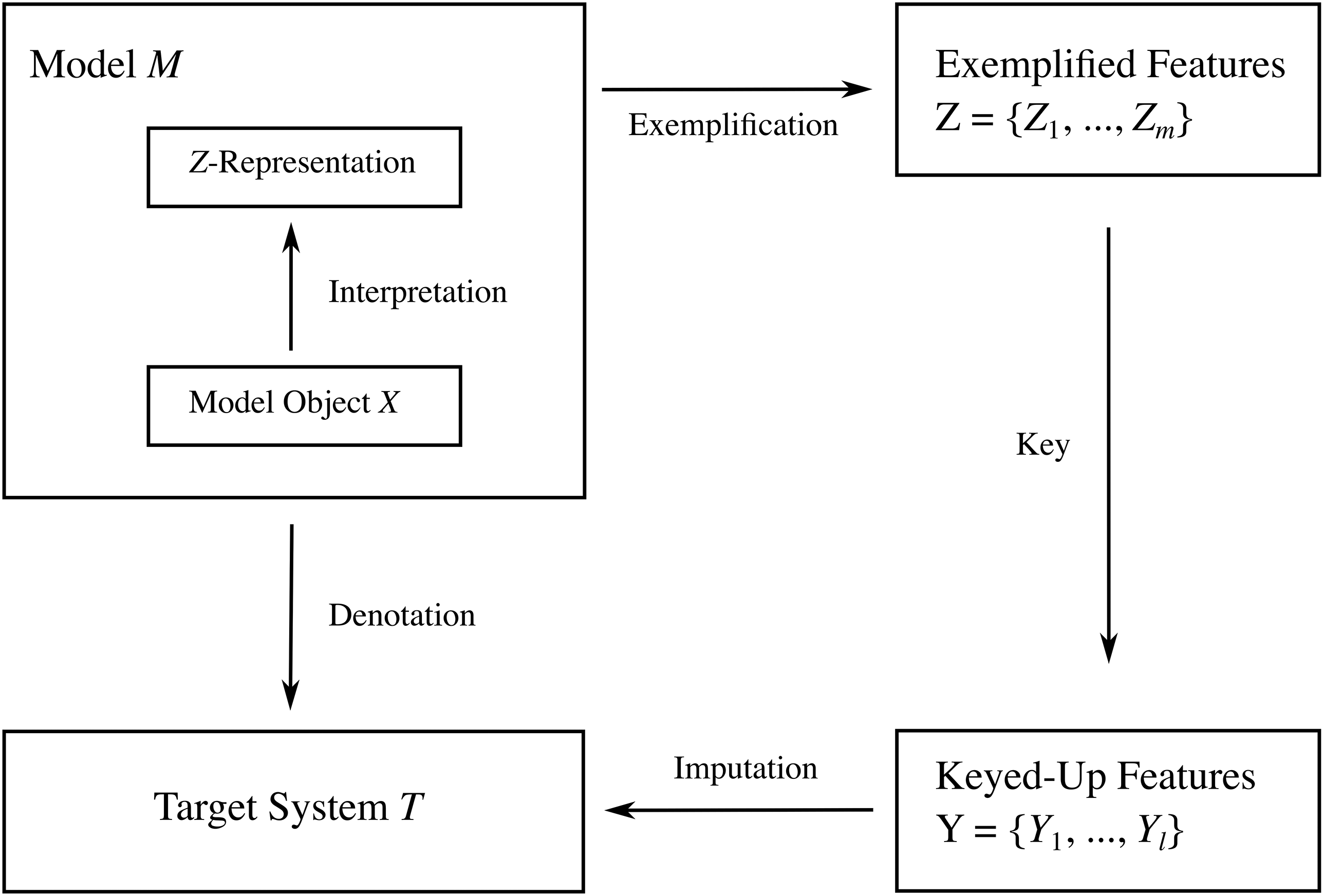

The questions and conditions that we have introduced so far, along with the relations between them, are summarised visually in Figure 3. We think that these are some of the most important questions and conditions, but we do not algorithmically consider them in order for each account in what follows. It is recognised that competing accounts of scientific representation engage with these questions and conditions in different ways, and as such we follow the existing literature in emphasising which of them are particularly pertinent for the accounts in question. Moreover, we don’t claim that this list is exhaustive. Indeed, in Reference Nguyen and FriggFrigg and Nguyen (2020) we discuss a number of additional issues that concern the use of mathematics in the empirical sciences, the use of different representational styles, and the relation between scientific representation and other kinds of representation, including works of art. A discussion of these issues presupposes answers to the questions we have introduced in this section, and so dealing with the issues we have introduced here provides the groundwork for further discussions.

Figure 3 The questions and conditions an account of representation must answer. The simple lines indicate that the question at the top breaks up into three different questions; the arrows indicate that a viable answer to the question must meet the condition that the arrow points to.

1.4 Roadmap

In the coming sections we investigate different accounts of scientific representation discussed in the philosophical literature.

In Section 2, we discuss resemblance accounts of scientific representation. These accounts are based on the idea that models, in some sense, resemble, or are supposed to resemble, their targets. We use ‘resemblance’ as an umbrella term for any kind of likeness between a model and its target. Some accounts focus on a kind of resemblance that is based on material features, the kind we invoke when we say, for instance, that London buses are similar to phone boxes because they are both red. We call resemblance of this kind similarity. An alternative version of resemblance understands model–target resemblances in terms of structural relationships, which are specified by different mappings, such as isomorphisms, between the two.

As we will see, such accounts of representation are most plausible when the resemblances in question are supposed to answer the Accuracy Question, with proposed resemblances answering the Semantic Question. However, a major question remains whether there is a way of explicating what ‘resemblance’ means that is robust enough to capture the sort of model–target relationships that are relevant across the sciences. As we discuss in that section, there are various models (including the ship model discussed earlier), where scientists explicitly exploit model–target mismatches in order to use the former to reason (and reason successfully) about the latter. As such, resemblance alone cannot provide an exhaustive answer to Accuracy Question, and therefore proposed resemblance cannot provide such an answer to the Semantic Question.

In Section 3, we discuss inferentialist accounts of scientific representation, which take the fact that models generate inferences about their targets as conceptually primitive. In a sense, such accounts reverse the roles played by the Semantic Question and the Surrogative Reasoning Condition: it’s precisely that models allow for surrogative reasoning that makes them representational, rather than the other way around. Such accounts have an interesting precedence in the philosophy of language and theories of truth, and we explore the extent to which they can be successfully deployed for understanding how models represent. We ultimately conclude that lessons from other areas of philosophy fail to motivate the idea that the Semantic Question can be put aside in its entirety, and that inferentialist accounts leave important questions open.

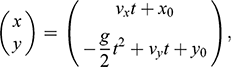

In Section 4, we present our own preferred account of scientific representation. We introduce the account via a close analysis of how you would reason were you to use the ship model to generate information about SS Monterey: you use the model to denote the target ship; the model exemplifies various features; you deploy a key, which turns exemplified features into features that are reasonable to impute to the target ship. This provides the ‘DEKI’ account of scientific representation. We explicate what each of these conditions mean, and we demonstrate how they can be combined to yield a complete account of scientific representation that answers each of the questions presented in this section in a way that meets the associated conditions. We then turn to the bridge jump model as another illustrative example, this time one involving a non-concrete model, paying attention to how the details of the conditions (the key in particular) are realised in that case. This demonstrates how the account is ‘skeletal’ in the sense that its conditions need to be filled in on a case-by-case basis in order to play an informative role. In providing examples of how this is done, we hope to show the practical value of thinking about epistemic representation through the lens we provide. Our account provides more than conceptual bookkeeping; it offers normative lessons for the ‘best practices’ in scientific modelling, and it suggests refocusing at least some of our philosophical investigations into how models work. To understand a model, we need to go beyond the model itself; we need to understand the disciplinary contexts and practices in which scientists reason with the model, and the rules that they implicitly subscribe to in doing so.

2 Resemblance and Representation

2.1 Introduction

It’s easy to be led to the idea that representation has something to do with resemblance: what makes a photograph a representation of its subject if not the fact that the two look alike? And philosophical accounts of representation that invoke resemblance have a long history, going back to Plato’s The Republic (Book X).

Scientific representation also appears to have something to do with resemblance. Consider the examples in the previous section. The scale model of the ship resembles the full-sized ship in some respects. After all, when you designed the model, you ensured that it had the same geometric shape as its target. Moreover, this resemblance isn’t accidental to the way in which the model works: you relied on it when you drew inferences from the behaviour of the model to the behaviour of the target. If the model were a different shape, then it would be unlikely that you would be able to extract any useful information about the full-sized ship from the model.

The same considerations apply to the model of the car jump. Here the model–target resemblance doesn’t concern what the two look like. But nevertheless, the model has a certain structure that, in some sense at least, seems to resemble the structure of the target. Both involve an object moving on an inclined surface, with a certain trajectory determined by the influence of gravity and Newtonian laws of motion. And when you reason with the model as a stunt coordinator, you work hard to ensure that the model trajectory matches the trajectory of the car. Without this, you threaten to put the lives of the stunt performers at serious risk.

These sorts of considerations suggest that when a model user draws surrogative inferences about the model’s target, they exploit the fact that the model and the target resemble one another. And given the tight connection between surrogative reasoning and scientific representation, this would suggest that these resemblances have something to do with how scientific models represent. The question, then, is where in the conceptual landscape introduced in the previous section should we locate resemblance? Does it answer the Semantic Question or the Accuracy question? And what sort of constraints does it place on answers to the Model Question?

In order to start addressing these questions, we first need to establish what we mean by ‘resemblance’, which, as it turns out, is no straightforward task (Section 2.2). Once we’ve explicated some options, we can then turn to investigate how resemblance might be put to work in helping us understand scientific representation (Section 2.3). The most viable suggestion is that resemblance relationships be invoked to answer the Accuracy Question: a model is accurate to the extent that it resembles its target (Section 2.4). Finally, we turn to the Model Question and see what restrictions the resemblance view imposes on the ontology of models, and indeed target systems (Section 2.5).

2.2 Resemblance

In order to understand how resemblance relates to representation, we first have to clarify what it takes for two systems to ‘resemble’ one another. And this is more than philosophical bookkeeping: as we will see, there are serious issues with relying on an intuitive understanding of the concept. In general, two things resembling each other means that they share some features. The model ship resembles the full-sized ship in the sense that they have the same shape; a London bus resembles a London phone box in the sense that they are of the same colour; and the copy of The Sunday Times on the front doorstep resembles all the other copies that day in the sense that they are made out of the same material and exhibit the same distribution of ink on paper.

For our current purposes it is useful to distinguish between two different kinds of features that can be relevant to resemblance. First, we can consider the sorts of homely material features we have in mind when we say that two objects are similar to one another. These features include visual features like colour, shape, and distribution of ink on paper, but in terms of scientific models they also include more general material features like having a certain volume, being subjected to a certain resistance, being acted on by gravity in a certain way, and so on. Second, we can focus on ‘structural’ features, which, broadly speaking, concern the arrangement of features rather than the features themselves.

We return to material features later in the text. At this point it is important to say more about what is meant by structural features. In the context of thinking about model-based science, there is a rich tradition in thinking about models as structures. These are mathematical entities, consisting of a domain made up of a set of elements and an ordered set of relations defined over them.Footnote 6 Thinking about model–target relations in these terms amounts to thinking about resemblance with respect to their structural features. For our current purposes, what’s important about this notion of structure is that the intrinsic nature of the elements of the domain, and the intrinsic nature of the relations defined upon them, are of no consequence. Consider, for example, a system consisting of a grandfather, his daughter, and her son. The structure whose domain is those three people, with the relation is a descendant of is the exact same structure as the structure with the same domain and with the relation is younger than. And if we consider three books of different lengths and the relation has fewer pages than, the books have the same structure as our family. Even though the ‘meaning’ or ‘intension’ of the relations is different in each case, they are the same from an abstract point of view: there are three objects with a relation on them, and that relation is irreflexive, asymmetric, and transitive. So we can say that a structure consists of dummy objects (because it only matters that there is something and it doesn’t matter whether the something are people or books or anything else) with purely extensionally defined relations on them (because it only matters between which objects the relation holds and not whether the relation is is a descendant of, is younger than, or has fewer pages than).Footnote 7

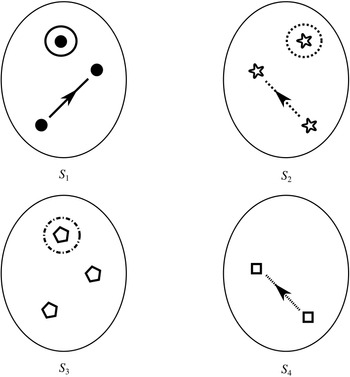

If we have two structures, we can ask whether they resemble one another with respect to their structural features. One way to make this precise is to ask whether there exists a function from the domain of one of the structures to the domain of the other (i.e. a way of associating each element of the former with one element of the latter) that preserves the relations defined on the former. One particularly important kind of such function is an isomorphism, which requires complete agreement with respect to structural features. To illustrate this, consider the four structures symbolically represented in Figure 4.

Figure 4 Four different structures

![]() consists of a domain of three objects (symbolised by the dots), with the top object having a property (symbolised by the black circle around it), and the bottom and middle objects being related by a two-place relation (symbolised by the line with the arrow running from one to the other).

consists of a domain of three objects (symbolised by the dots), with the top object having a property (symbolised by the black circle around it), and the bottom and middle objects being related by a two-place relation (symbolised by the line with the arrow running from one to the other).

![]() ,

,

![]() , and

, and

![]() are also structures, with different domains and properties and relations defined on them (where objects, properties, and relations are symbolised in the same way as in

are also structures, with different domains and properties and relations defined on them (where objects, properties, and relations are symbolised in the same way as in

![]() ).

).

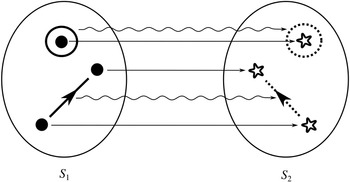

We can see that

![]() and

and

![]() share their structural features in the sense that they are isomorphic: they both consist of three objects, a property, and a two-place relation, and these properties and relations are distributed across the domains in the same way. This can be made precise by considering the arrows from

share their structural features in the sense that they are isomorphic: they both consist of three objects, a property, and a two-place relation, and these properties and relations are distributed across the domains in the same way. This can be made precise by considering the arrows from

![]() to

to

![]() in Figure 5. The straight arrows associate each element of the domain of

in Figure 5. The straight arrows associate each element of the domain of

![]() with a unique element of the domain of

with a unique element of the domain of

![]() in such a way that no two objects in

in such a way that no two objects in

![]() map to the same object in

map to the same object in

![]() , and all of the objects in

, and all of the objects in

![]() have a corresponding element in

have a corresponding element in

![]() . This means that the arrows specify a mapping that is a bijection. The wiggly arrows symbolise how this function preserves the structure of the property and relation in

. This means that the arrows specify a mapping that is a bijection. The wiggly arrows symbolise how this function preserves the structure of the property and relation in

![]() . The object with the property is mapped to an object with the corresponding property (the dotted circle in

. The object with the property is mapped to an object with the corresponding property (the dotted circle in

![]() ), and the objects related by the two-place relation are mapped to objects also related by a two-place relation (the dotted line with the arrow in the middle in

), and the objects related by the two-place relation are mapped to objects also related by a two-place relation (the dotted line with the arrow in the middle in

![]() ). The fact that the function is a bijection that preserves the property and relation means that it is an isomorphism. So,

). The fact that the function is a bijection that preserves the property and relation means that it is an isomorphism. So,

![]() and

and

![]() are isomorphic to each other; they share the exact same structural features.

are isomorphic to each other; they share the exact same structural features.

Figure 5 Isomorphic structures

It is also clear that there is no such isomorphism between

![]() and either

and either

![]() or

or

![]() . If we compare

. If we compare

![]() to

to

![]() , there is no corresponding two-place relation in the latter and so no function from

, there is no corresponding two-place relation in the latter and so no function from

![]() to

to

![]() can preserve that relation. And if we compare

can preserve that relation. And if we compare

![]() to

to

![]() , we can see that any function from the former to the latter will have to be such that at least two elements map to the same element, and so there is no bijection between the two domains.Footnote 8

, we can see that any function from the former to the latter will have to be such that at least two elements map to the same element, and so there is no bijection between the two domains.Footnote 8

Now let’s return to the first notion of resemblance we discussed, resemblance with respect to non-structural, that is, material, features. This sort of resemblance is what we call ‘similarity’. In the first instance, we might then say that two objects are similar if and only if they share at least one feature. In the case of the model ship, the model and the target have the same shape, and so they count as similar.

Unfortunately, such an account of similarity is a non-starter. As argued by Reference QuineQuine (1969, 117–8) and Reference Goodman and GoodmanGoodman (1972, 443) in their influential critiques of the notion, the mere sharing of some feature makes similarity vacuous: if this were right, everything would be similar to everything else. To see why, consider some pair of objects that don’t seem to be similar to one another: a mug on the desk and the dog in the dog bed, for example. With a little ingenuity we can come up with a material feature that they share, for example, being located in East London. Thus, according to the proposal, they are similar to one another. This is not an artefact of the example: for any pair of objects it is not too difficult to specify some material feature that they share, even if we have to resort to things like being earthbound, being located in space-time, being thought about by you when you are reading this Element, and so on. Given that it’s too easy for any pair of objects to find a material feature they share, it’s too easy to conclude that they are similar.

One thing that has gone wrong here is that there is no restriction on which shared material features can be considered to establish the similarity relation. Rather than requiring that two objects share arbitrary features to be considered similar, we might require that they share relevant features, where what counts as ‘relevant’ depends on the context in which the similarity is being considered. If location isn’t relevant in a particular context, then the mug and the dog won’t count as similar (in that context) on grounds that they are located in East London, and likewise for the other examples.

In the context of accounts of scientific representation, this seems to be the notion of similarity that Giere has in mind when he states ‘since anything is similar to anything else in some respects and to some degree, claims of similarity are vacuous without at least an implicit specification of relevant respects and degrees’ (Reference GiereGiere 1988, 81).Footnote 9 By appealing to the role that context plays in specifying the features that are relevant to similarity, the issue of spurious similarities is avoided.

However, this account faces another problem concerning the distinction between two objects being similar in the sense that they share the exact same relevant feature and being similar in the sense that they each instantiate a feature, where the features themselves are ‘similar’.Footnote 10 To illustrate the latter, consider again the similarity between a London bus and a London phone box, where the relevant similarity concerns their colour. If we look closer, we’ll see that the bus and the phone box aren’t the exact same colour: the phone box is a shade darker than the bus. Hence, there’s no relevant colour property that they share, which is what would be required by the idea under consideration. Accordingly, it looks like we’re forced to conclude that the two aren’t similar with respect to their colour after all, which seems wrong. The similarity we are interested in here is located at the level of properties themselves: the red of the phone box is similar to red of the bus. But now we wonder whether, and if so how, this kind of similarity can be analysed in terms of two objects instantiating the same property.

Reference ParkerParker (2015), in her discussion of Weisberg’s account of scientific representation, provides another relevant example. Here the relevant similarity concerns whether the US Army Corps of Engineers’ physical scale model of the San Francisco bay area and the bay area itself are similar with respect to relevant hydrodynamical parameters such as their Froude number (a dimensionless parameter quantifying flow inertia). Due to various issues surrounding how dimensionless parameters are subject to scaling effects, the Froude number of the model and the Froude number of the actual bay aren’t exactly the same. But in certain modelling contexts, including the San Francisco bay case, the numbers count as similar as long as the model’s parameter is ‘close enough’ to the target’s parameter. The question then is whether shared features accounts can accommodate this kind of similarity.

Parker suggests that one way to make good on the fact that sharing similar, rather than the same, features still counts as a relevant similarity is to turn the former into the latter by introducing the idea of an imprecise feature (ibid.).Footnote 11 The rough idea is that if two objects instantiate similar but non-identical, features, then we can consider a more general feature which both of the features are instances of. When it comes to quantitative features (i.e. features that take numerical values) like the two Froude numbers, this can be done via introducing interval-valued features of the form: ‘the value of the feature lies in the interval

![]() ’ (ibid. p. 47), where

’ (ibid. p. 47), where

![]() is the value of the parameter in the target, and

is the value of the parameter in the target, and

![]() specifies how precise the overlap needs to be.Footnote 12

specifies how precise the overlap needs to be.Footnote 12

Whilst this might work for features of this kind, it is unclear whether this approach can be applied to all instances of similarity at the level of features themselves. The aforementioned approach won’t work in the context of qualitative features (i.e. features that don’t take numerical values), which require some other way of introducing imprecision. It’s not obvious what this would be (consider, e.g. the difficulty in assigning a number measuring how ‘rational’ an agent is, and using this number to measure the ‘similarity’ between a model-agent reasoning according to rational choice theory and an imperfect human agent).

Since none of the problems for the resemblance account of representation will depend on how this issue is resolved, let’s simply assume that this can be done in some way or another.

We hope it is now clear what we mean by ‘resemblance’. Where the resemblance concerns material features, we use the term ‘similarity’, and when it concerns the structure of the model and the target, we use the term ‘isomorphism’. With respect to the former, what’s important is that the context provides some restriction on which material features are considered relevant. Moreover, it requires careful treatment in cases where the similarity relationships in question are underpinned by the sharing of similar features, rather than co-instantiating the exact same feature. With respect to the latter, what’s important is that the comparison concerns the existence of structure-preserving mappings between models and their targets. With this in mind, we can now turn to how resemblance, thought of in either of these two ways, can be used to understand how scientific models represent.

2.3 The Semantic Question

A straightforward attempt to answer the Semantic Question by invoking the notion of resemblance is the following:

Resemblance: A model represents its target if and only if the two resemble each other.

Further to the previous section it is understood that resemblance can be either similarity (in relevant respects) or isomorphism. Such an answer has the benefit of meeting the Surrogative Reasoning Condition: if a model and its target are similar in the sense of sharing relevant features, then, from the fact that a model has a relevant feature, a model user can infer that the target does as well; and if a model and its target are isomorphic, then if the model has a certain structure, a model user can infer that the target does too.

Unfortunately, Resemblance fails to meet the other conditions, and has a number of other significant flaws. With respect to the Directionality Condition, it has been pointed out that resemblance has the wrong logical properties to establish representation: similarity and isomorphism are both reflexive (everything is similar to itself) and symmetric (if x is similar to y, then y is similar to x), but representation is not.Footnote 13 Due to symmetry, all targets also represent their models, which is not the case; and due to reflexivity, all representations are self-representations, which is wrong. Even if self-representation may occur in certain circumstances (Magritte’s The Treachery of Images provides a nice example), this is the exception rather than the rule. So the logical properties of similarity and isomorphism pose a problem for anyone appealing to resemblance to establish representation.

Resemblance fails to accommodate the Targetless Models Condition: if a model doesn’t have a target, then there is nothing it is similar to. So the proposal simply remains silent about how these models work. Defenders of a resemblance view would then have to provide some further analysis of how such models represent, if they do at all.

The next problem has gained attention due to Reference PutnamPutnam (1981, 1–3).Footnote 14 In a thought experiment he invites us to imagine an ant tracing a line in the sand that just so happens to resemble Churchill. Putnam asks: does the trace represent Churchill? According to him, the answer is ‘no’. The ant has never seen Churchill, indeed has no connection to him whatever, and certainly didn’t have the intention to represent him. Putnam concludes that this shows that ‘[s]imilarity … to the features of Winston Churchill is not sufficient to make something represent or refer to Churchill’ (ibid., 1).Footnote 15 What is true of the trace and Churchill is true of every other pair of items that resemble one another: resemblance on its own does not establish representation.

A final problem is even more damning. The Misrepresentation Condition requires that models can represent their targets, but do so inaccurately.Footnote 16 But if models have to resemble their targets in order to represent them, either via sharing relevant features or being related by an isomorphism, then this precludes the possibility of misrepresentation. If a model has to share some feature with its target to represent it as having that feature, then it cannot be mistaken about whether or not the latter has it (of course, the model could be accurate with respect to another feature, but that doesn’t help Resemblance explain how the model misrepresents features it purports to represent). Alternatively, if a model has to share the relevant structure with a target in order to represent it (which is required by the existence of an isomorphism), then it cannot be mistaken about whether the target has that structure.Footnote 17 Thus, Resemblance has difficulty meeting the Misrepresentation Condition: representation is conflated with accurate representation, and cases of misrepresentation are misclassified as non-representation.

For these reasons, Resemblance is a non-starter. However, the fact that resemblance allows for successful surrogative reasoning in cases where models are accurate representations suggests that it may feature somewhere else in an account of representation. The idea is the following: the intentional act of a model user proposing that a model resemblances its target can be deployed to answer the Semantic Question, and then whether or not the two are in fact similar (the proposal can be true or false) can be used to answer the Accuracy Question. This delivers the following response to the Semantic Question:

Proposed Resemblance: a model represents its target if and only if a model user proposes that the two resemble each other.

Again, it is understood that resemblance can be either similarity or isomorphism. Such an answer seems plausible, and we’ll address how it relates to accuracy in the next section, but for now a few comments on how it fares as an answer to the Semantic Question are in order.

A model user’s proposal that a model resembles its target is what Giere calls a ‘theoretical hypothesis’, a statement of the form ‘the model and the target resemble each other in these respects (structural or otherwise)’ (Reference GiereGiere 1988, 81). These hypotheses may also depend on the purposes for which the model is being deployed (i.e. model users may deploy different hypotheses in different contexts), and on the intentions of the model user. For example, Giere further develops this account and states that we should analyse how models represent using the following schema: ‘S uses X to represent W for purposes P’ (Reference Giere2004, 743), or in more detail: ‘Agents (1) intend; (2) to use model, M; (3) to represent a part of the world W; (4) for purposes, P. So agents specify which similarities are intended and for what purpose’ (Reference GiereGiere 2010, 274). Other authors have presented similar views. Van Fraassen offers the following as the ‘Hauptstatz’ of a theory of representation: ‘There is no representation except in the sense that some things are used, made, or taken, to represent things as thus and so’ (Reference van Fraassen2008, 23, original emphasis). Bueno submits that ‘representation is an intentional act relating two objects’ (Reference Bueno, Magnus and Busch2010, 94, original emphasis), and Bueno and French point out that using one thing to represent another thing is not only a function of (partial) isomorphism but also depends on ‘pragmatic’ factors ‘having to do with the use to which we put the relevant models’ (Reference Bueno and French2011, 885).

According to this way of thinking then, it is the activities of model users, in offering theoretical hypotheses proposing resemblances, that answer the Semantic Question. So how does Proposed Resemblance fare with respect to the conditions of adequacy offered in the introduction? Pretty well, as it turns out. The Surrogative Reasoning Condition is met via that fact that in proposing a resemblance, a model user can infer from the fact that a model has a certain feature (structural or otherwise) to the claim that the target shares that feature. And since theoretical hypotheses can be true or false, it allows for the fact that the target might fail to have that feature, thereby accommodating cases of misrepresentation.

Proposed Resemblance also avoids at least some of the issues with the logical properties of resemblance discussed earlier: people typically don’t offer theoretical hypotheses according to which some object resembles itself, so reflexivity is avoided. Whether it avoids the issue arising from symmetry is less clear. Plausibly, proposing that x resembles y seems to engender a commitment that y resembles x, but for our current purposes we’ll put this aside.

Moreover, the accidental resemblances that featured in Putnam’s thought experiment no longer pose a problem for this way of thinking about representation: the ant’s trace doesn’t represent Churchill because no one, let alone the ant, proposes that the two are similar. All in all, this way of thinking about the Semantic Question seems like it has potential, although it is worth nothing that, as with Resemblance, Proposed Resemblance has nothing to say about targetless models.

One of the main arguments in its favour is how Proposed Resemblance accommodates surrogative reasoning: a model user can exploit a proposed resemblance to infer that a target has a feature from the fact that the model does. But a question remains whether this suffices to accommodate all cases of surrogative reasoning. Giere suggests that he’s open to other ways when he describes how models allow for such reasoning: ‘[o]ne way, perhaps the most important way, but probably not the only way, is by exploiting similarities between a model and that aspect of the world it is being used to represent’ (Reference Giere2004, 747, emphasis added). Giere does not expand on what other ways he has in mind, but given that structural relations like isomorphism play no role in his discussion, it is unlikely that this is what he had in mind. If so, this amounts to the admission that there are kinds of representation that are not based on resemblance. We agree, and we will encounter cases of this kind in the next section and in Section 4. But if there are such forms of representation, then Proposed Resemblance cannot be a complete answer to the Semantic Question.

2.4 The Accuracy Question

Can resemblance be invoked to answer the Accuracy Question? The way in which resemblance (of relevant features, structural or otherwise) could be so used should now be clear: in proposing that a model resembles a target with respect to some feature, a model user establishes that the model represents the target as having that feature; if the target does in fact resemble the model with respect to that feature, then the model accurately represents the target with respect to that feature.

Such an answer meets our success conditions introduced in the introduction. The fact that context is required in specifying the features relevant to the resemblance means this answer meets the Contextuality Condition.Footnote 18 In a context where the Ptolemaic model is proposed to be similar to the solar system only with respect to how the celestial bodies appear in the sky, the two are so similar, and thus the model is accurate with respect to that feature. But in a context where the model is proposed to be similar to the target more generally, including with respect to features like the trajectory of the orbits themselves, the two are not so similar (since in the model the planets orbit the earth, and in the actual solar system they all orbit the sun) and so the model is inaccurate in those respects.

This answer also meets the Gradation Condition, in fact in multiple ways. A model user may propose more and more relevant similarities between a model and a target, allowing for more and more accurate representation if those resemblances hold.Footnote 19 Alternatively, if a model user proposes some fixed collection of relevant features, the extent to which the model actually resembles its target can also come in degrees, either in the sense of resembling the model only with respect to some of them or in terms of the importance of the resemblances in question or in terms about how similar the model and the target are with respect to those features, when similarity is understood at the level of the features themselves (cf. the discussion of imprecise features in Section 2.1). So far so good.

The question then is whether proposed resemblance underpins all instances of successful surrogative reasoning – this is the issue that arose at the end of the previous section. If it’s not, then resemblance cannot be a complete answer to the Accuracy Question. As we’ve already indicated, we think that there is reason to doubt that resemblance can play this kind of universal role. Let’s start with an easy (and by now well-rehearsed) example before discussing a bona fide scientific case.

Consider the London Tube map. It’s a two-dimensional array of dots, representing tube stations, connected by different coloured lines, representing tube lines. Anyone with some familiarity with such maps finds it easy to use the map to navigate the underground system. A user identifies the dot representing the station where they’ll begin their journey, the dot representing their desired destination station, and then they can determine various different possibilities for which lines they should take, and where they need to change. For example, if you want to travel from Leyton in East London to Victoria in Central London, then you can take the route represented by the red (Central) line connecting the dot representing Leyton to the dot representing Oxford Circus, followed by the light blue (Victoria) line connecting the latter to the dot representing Victoria. Using the map to plan such a journey is exactly what we mean by ‘surrogative reasoning’.

What relationships between the map and the underground system does the map user exploit when performing such reasoning? It is commonplace to point out that the tube map represents the topology of the underground (i.e. the way in which the stations are connected to each other).Footnote 20 Moreover, the topology of the map is the same as the topology of the tube system, and in this sense, when model users reason about topological features of the latter by investigating the former, they exploit this resemblance, something that Proposed Resemblance captures nicely. But there are other relationships that are important too. A map user also cares about which specific coloured lines represent which specific underground lines, which is not a topological feature. They know that the red line represents the Central line, that the light blue line represents the Victoria line, and so on. So when the traveller was planning their trip from Leyton to Victoria, they knew more than ‘I need to change at Oxford Circus’, they knew that they needed to change onto the Victoria Line at Oxford Circus. To that end they need to understand the colour coding of the tube lines – specifically, they need to know that the light blue line represents the Victoria Line. But the relationship between colours on the map and underground lines in the world are purely conventional, and there’s no sense in which the colour light blue is co-instantiated by, or similar to, the features that distinguish the Victoria Line from others. So this style of surrogative reasoning doesn’t seem to proceed via exploiting resemblances.Footnote 21

Conventional elements in representations are not limited to mundane representations like tube maps; they are also used in proper scientific contexts. As an example, consider fractal geometry. Most readers will be familiar with colourful pictures of the Mandelbrot set, which have become so popular that one can even find them printed on T-shirts.Footnote 22 The function of the colours in these pictures goes beyond the aesthetic. In fact, they are a colour code. One starts by considering an iterative function that takes as input parameter a complex number c. One then asks, for a particular number c, whether the function converges or diverges, and if it diverges, how fast it does so. The result of this is then colour coded: if the function converges for c, then the point in the plane representing c is coloured black, and if the function diverges, then a shading from yellow to blue is used to indicate the speed of divergence, where yellow is slow and blue is fast (Reference Argyris, Faust and HaaseArgyris et al. 1994, 663). Of course, a different colour coding can be used. The association of certain colours with certain speeds of divergence is entirely conventional. Nevertheless, the picture provides important scientific information, and it does so without invoking any similarity between the representation and the target – divergence speeds are not similar to colours!

What this suggests is that there are some forms of surrogative reasoning that exploit non-resemblance relationships between representations and their targets. In this case, a particular feature in a map or the fractal image (i.e. a colour) is readily understood as representing some distinct feature in its target (i.e. a tube line or a speed of divergence), but without invoking a resemblance relation. Here the model–target feature associations that are relied upon are based on convention. In other cases the underlying association may be more complex. The ship example in the introduction illustrates this. We will go over the details in Section 4, but it pays to have a brief look at some details of the case now to see that in order to perform (successful) surrogative reasoning, a model user should associate model features with features of their targets in a way that doesn’t rely on resemblance alone.

Recall the scenario. You’re a shipbuilder tasked with determining what size engine a ship needs to be able to travel at a certain speed. You have built a scale model of the ship, which is the same geometric shape as the ship, but is much smaller. Suppose that the model is a 1:s scale (e.g. 1:100) of the full-sized ship, which means that each of its linear dimensions (e.g. length, width, and height) are all 1/s the size of the full-sized ship. You tow your scale model through a tank and measure the complete resistance,

![]() , that it faces, which in turn allows you to calculate how big an ‘engine’ (i.e. how much force you need to tow it with) the scale model ship needs to move at various speeds. The question then is how can you translate the results of your investigation into something that’s informative about the full-sized ship itself?

, that it faces, which in turn allows you to calculate how big an ‘engine’ (i.e. how much force you need to tow it with) the scale model ship needs to move at various speeds. The question then is how can you translate the results of your investigation into something that’s informative about the full-sized ship itself?

The first thing you might ask is: if you performed your experiment on the model ship at various velocities, which one is relevant for determining the behaviour of its target? A defender of resemblance might think: the same velocity at which the full-sized ship will travel. This turns out to be wrong. What’s important when performing these model experiments is not that the model and the target move at the same velocity, it’s that they create the same wave pattern, and when the two are different sizes, this requires different velocities. We’ll go into more detail about this in Section 4, but for now what’s important is that in order to ensure that the model ship creates the same wave pattern as the full-sized ship, the relevant velocity needs to be scaled by the square root of the ratio of their lengths.

The next question is: if we determine the wave-making resistance faced by the model ship, how do we translate that to the wave-making resistance faced by the full-sized ship? A naïve defender of resemblance might say: we need to scale the resistance proportionally to the scale s of the model. This is wrong again. As it turns out, the way in which wave-making resistance changes across scales depends on the ratio of the cubes of the linear dimensions, that is, the ratio of the amount of water displaced by the model ship and full-sized ship (again, we’ll go into more detail about this in Section 4).

So in order to perform the right model experiment, and in order to translate its results into true claims about the target ship, the shipbuilder needs to ensure that the model and the target do not resemble one another with respect to their velocities (not even in the imprecise way discussed earlier in Section 2.2) and needs to scale the wave-making resistance faced by the ship in a way that depends on the ratio of the cubes of their lengths. The point then is that in order for the model ship to accurately represent the full-sized ship, the shipbuilder needs to perform some relatively complex calculations on the results of the model experiments, calculations that rely on different scales for different values (e.g. velocity and wave-making resistance) and that require that the model and the target drastically diverge from one another with respect to at least some features (e.g. velocity). This kind of reasoning does not seem to proceed via merely proposing that the model and the target resemble one another in some relevant respects, with the model being accurate if they are so similar.